Accessing Vertica

The following table shows which client drivers you have to set up to access Vertica with a supported programming language:

1 - C/C++

You must install the ODBC client driver before creating C/C++ client applications.

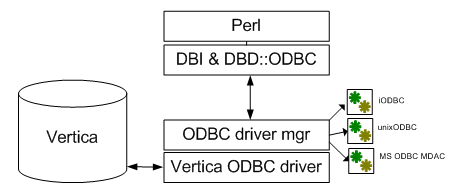

Vertica provides an Open Database Connectivity (ODBC) driver that allows applications to connect to the Vertica database. This driver can be used by custom-written client applications that use the ODBC API to interact with Vertica. ODBC is also used by many third-party applications to connect to Vertica, including business intelligence applications and extract, transform, and load (ETL) applications.

This section details the process for configuring the Vertica ODBC driver. It also explains how to use the ODBC API to connect to Vertica in your own client applications.

While client applications written in C, C++, Perl, PHP, etc. all use the ODBC client driver to connect to Vertica, this section only concerns C and C++ applications.

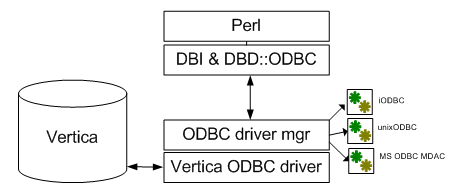

1.1 - ODBC architecture

The ODBC architecture has four layers:

- Client Application

  Is an application that opens a data source through a Data Source Name (DSN). It then sends requests to the data source, and receives the results of those requests. Requests are made in the form of calls to ODBC functions.

- Driver Manager

  Is a library on the client system that acts as an intermediary between a client application and one or more drivers. The driver manager:

  - Resolves the DSN provided by the client application.

  - Loads the driver required to access the specific database defined within the DSN.

  - Processes ODBC function calls from the client or passes them to the driver.

  - Retrieves results from the driver.

  - Unloads drivers when they are no longer needed.

  On Windows and macOS client systems, the driver manager is provided by the operating system. On Linux systems, you usually need to install a driver manager. See Client drivers support for a list of driver managers that can be used with Vertica on your client platform.

- Driver

  A library on the client system that provides access to a specific database. It translates requests into the format expected by the database, and translates results back into the format required by the client application.

- Database

  The database processes requests initiated at the client application and returns results.

1.2 - ODBC feature support

The ODBC driver for Vertica supports most of the features defined in the Microsoft ODBC 3.5 specifications. The following features are not supported:

- Updatable result sets

- Backwards scrolling cursors

- Cursor attributes

- More than one open statement per connection. Simultaneously executing statements must each belong to a different connection. For example, you cannot execute a new statement while another statement has a result set open. To execute another statement with the same connection/session, wait for the current statement to finish executing and close its result set, then execute the new statement.

- Keysets

- Bookmarks

The Vertica ODBC driver accurately reports its capabilities. If you need to determine whether it complies with a specific feature, you should query the driver's capabilities directly using the SQLGetInfo() function.

1.3 - Vertica and ODBC data type translation

Most data types are transparently converted between Vertica and ODBC. This section explains several data types that require special handling.

| Vertica Data Types | C Data Type | ODBC C Typedef | C Type Identifier |
| --- | --- | --- | --- |
| BINARY, VARBINARY | char[] | SQL_BINARY | SQL_C_BINARY |
| LONG VARBINARY | char[] | SQL_LONGVARBINARY | SQL_C_BINARY |
| BOOLEAN | SQLSMALLINT | SQL_SMALLINT | SQL_C_SSHORT |
| CHAR, VARCHAR | char[] | SQL_CHAR | SQL_C_CHAR |
| LONG VARCHAR | char[] | SQL_LONGVARCHAR | SQL_C_CHAR |
| DATE | SQL_DATE_STRUCT | SQL_TYPE_DATE | SQL_C_TYPE_DATE |
| TIME | SQL_TIME_STRUCT | SQL_TYPE_TIME | SQL_C_TYPE_TIME |
| TIMESTAMP | SQL_TIMESTAMP_STRUCT | SQL_TYPE_TIMESTAMP | SQL_C_TYPE_TIMESTAMP |
| INTERVAL | SQL_INTERVAL_STRUCT | SQL_INTERVAL_DAY_TO_SECOND | SQL_C_INTERVAL_DAY_TO_SECOND |
| INTERVAL DAY TO SECOND | SQL_INTERVAL_STRUCT | SQL_INTERVAL_DAY_TO_SECOND | SQL_C_INTERVAL_DAY_TO_SECOND |
| INTERVAL YEAR TO MONTH | SQL_INTERVAL_STRUCT | SQL_INTERVAL_YEAR_TO_MONTH | SQL_C_INTERVAL_YEAR_TO_MONTH |
| DOUBLE PRECISION, FLOAT | SQLREAL | SQL_REAL | SQL_C_FLOAT |
| INTEGER, BIGINT, SMALLINT | SQLBIGINT | SQL_BIGINT | SQL_C_SBIGINT |
| NUMERIC, DECIMAL, NUMBER, MONEY | SQL_NUMERIC_STRUCT | SQL_NUMERIC | SQL_C_NUMERIC |
| GEOMETRY | char[] | SQL_LONGVARBINARY | SQL_C_CHAR |
| GEOGRAPHY | char[] | SQL_LONGVARBINARY | SQL_C_CHAR |
| UUID | SQLGUID (see note below) | SQL_GUID | SQL_C_GUID |

Notes

- The GEOMETRY and GEOGRAPHY data types are treated as LONG VARCHAR data by the ODBC driver.

- Vertica supports the standard interval data types supported by ODBC. See Interval Data Types in Microsoft's ODBC reference.

- Vertica version 9.0.0 introduced the UUID data type, including JDBC support for UUIDs. The Vertica ADO.NET, ODBC, and OLE DB clients added full support for UUIDs in version 9.0.1. Vertica maintains backwards compatibility with older supported client driver versions that do not support the UUID data type, as follows:

| When an older client... | Vertica... |
| --- | --- |
| Queries tables with UUID columns | Translates the native UUID values to CHAR values. |
| Inserts data into a UUID column | Converts the CHAR value sent by the client into a native UUID value. |
| Queries a UUID column's metadata | Reports its data type as CHAR. |

1.4 - ODBC header file

The Vertica ODBC driver provides a C header file named verticaodbc.h that defines several useful constants that you can use in your applications. These constants let you access and alter settings specific to Vertica.

This file's location depends on your client operating system:

The constants defined in this file are listed below.

| Parameter | Description |
| --- | --- |
| SQL_ATTR_VERTICA_RESULT_BUFFER_SIZE | Sets the size of the buffer used when retrieving results from the server. Associated functions: SQLSetConnectAttr(), SQLGetConnectAttr() |
| SQL_ATTR_VERTICA_DIRECT_BATCH_INSERT | Deprecated, always set to 1. Associated functions: SQLSetConnectAttr(), SQLSetStmtAttr(), SQLGetConnectAttr(), SQLGetStmtAttr() |
| SQL_ATTR_VERTICA_LOCALE | Changes the locale from en_US@collation=binary to the ICU locale specified. See Setting the locale and encoding for ODBC sessions for an example of using this parameter. Associated functions: SQLSetConnectAttr(), SQLGetConnectAttr() |

1.5 - Connecting to the database

The first step in any ODBC application is to connect to the database. When you create the connection to a data source using ODBC, you use the name of the DSN that contains the details of the driver to use, the database host, and other basic information about connecting to the data source.
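As a rough illustration of what such a DSN holds, an entry on a Linux client whose driver manager reads an odbc.ini file might look like the fragment below. The key names mirror the DSN shown in the connection failover example later in this section. The Port key and all of the values (DSN name, host name, database name) are assumptions chosen for illustration, not settings taken from a real system.

```ini
# Hypothetical DSN entry; every value here is a placeholder.
[ExampleDB]
Description=Example Vertica database
Driver=/opt/vertica/lib64/libverticaodbc.so
Database=ExampleDB
Servername=verticahost.example.com
# Assumed key; 5433 is the standard Vertica port.
Port=5433
```

On Windows, DSNs are created through the ODBC Manager rather than by editing a file.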

There are four steps your application needs to take to connect to a database:

- Call SQLAllocHandle() to allocate a handle for the ODBC environment. This handle is used to create connection objects and to set application-wide settings.

- Use the environment handle to set the version of ODBC that your application wants to use. This ensures that the data source knows which API your application will use to interact with it.

- Allocate a database connection handle by calling SQLAllocHandle(). This handle represents a connection to a specific data source.

- Use the SQLConnect() or SQLDriverConnect() functions to open the connection to the database.

Note

If you specify a locale either in the connection string or in the DSN, the call to the connection function returns SQL_SUCCESS_WITH_INFO on a successful connection, with messages about the state of the locale.

When creating the connection to the database, use SQLConnect() when the only options you need to set at connection time are the username and password. Use SQLDriverConnect() when you want to change connection options, such as the locale.

The following example demonstrates connecting to a database using a DSN named ExampleDB. After it creates the connection successfully, this example simply closes it.

// Demonstrate connecting to Vertica using ODBC.

// Standard i/o library

#include <stdio.h>

#include <stdlib.h>

// Only needed for Windows clients

// #include <windows.h>

// SQL include files that define data types and ODBC API

// functions

#include <sql.h>

#include <sqlext.h>

#include <sqltypes.h>

int main()

{

SQLRETURN ret; // Stores return value from ODBC API calls

SQLHENV hdlEnv; // Handle for the SQL environment object

// Allocate a SQL environment object

ret = SQLAllocHandle(SQL_HANDLE_ENV, SQL_NULL_HANDLE, &hdlEnv);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not allocate a handle.\n");

exit(EXIT_FAILURE);

} else {

printf("Allocated an environment handle.\n");

}

// Set the ODBC version we are going to use to

// 3.

ret = SQLSetEnvAttr(hdlEnv, SQL_ATTR_ODBC_VERSION,

(SQLPOINTER) SQL_OV_ODBC3, SQL_IS_UINTEGER);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not set application version to ODBC 3.\n");

exit(EXIT_FAILURE);

} else {

printf("Set application version to ODBC 3.\n");

}

// Allocate a database handle.

SQLHDBC hdlDbc;

ret = SQLAllocHandle(SQL_HANDLE_DBC, hdlEnv, &hdlDbc);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not allocate database handle.\n");

exit(EXIT_FAILURE);

} else {

printf("Allocated Database handle.\n");

}

// Connect to the database using

// SQL Connect

printf("Connecting to database.\n");

const char *dsnName = "ExampleDB";

const char* userID = "ExampleUser";

const char* passwd = "password123";

ret = SQLConnect(hdlDbc, (SQLCHAR*)dsnName,

SQL_NTS,(SQLCHAR*)userID,SQL_NTS,

(SQLCHAR*)passwd, SQL_NTS);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not connect to database.\n");

exit(EXIT_FAILURE);

} else {

printf("Connected to database.\n");

}

// We're connected. You can do real

// work here

// When done, free all of the handles to close them

// in an orderly fashion.

printf("Disconnecting and freeing handles.\n");

ret = SQLDisconnect( hdlDbc );

if(!SQL_SUCCEEDED(ret)) {

printf("Error disconnecting from database. Transaction still open?\n");

exit(EXIT_FAILURE);

}

SQLFreeHandle(SQL_HANDLE_DBC, hdlDbc);

SQLFreeHandle(SQL_HANDLE_ENV, hdlEnv);

exit(EXIT_SUCCESS);

}

Running the above code prints the following:

Allocated an environment handle.

Set application version to ODBC 3.

Allocated Database handle.

Connecting to database.

Connected to database.

Disconnecting and freeing handles.

See Setting the locale and encoding for ODBC sessions for an example of using SQLDriverConnect to connect to the database.

Notes

- If you use the DataDirect® driver manager, you should always use the SQL_DRIVER_NOPROMPT value for the SQLDriverConnect function's DriverCompletion parameter (the final parameter in the function call) when connecting to Vertica. Vertica's ODBC driver on Linux and UNIX platforms does not contain a UI, and therefore cannot prompt users for a password.

- On Windows client platforms, the ODBC driver can prompt users for connection information. See Prompting windows users for missing connection properties for more information.

- If your database does not comply with your Vertica license agreement, your application receives a warning message in the return value of the SQLConnect() function. Always have your application examine this return value to see if it is SQL_SUCCESS_WITH_INFO. If it is, have your application extract and display the message to the user.

1.6 - Load balancing

To enable native load balancing on your client, set the ConnectionLoadBalance connection parameter to true either in the DSN entry or in the connection string.

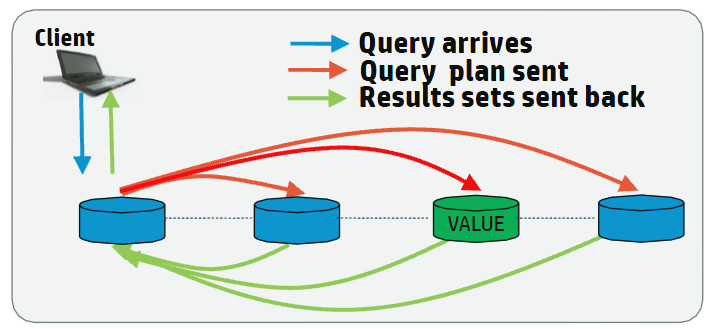

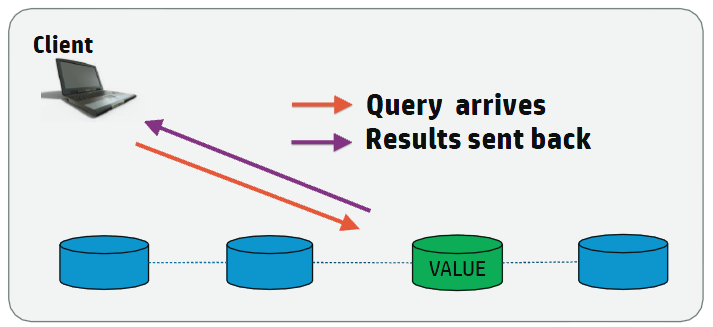

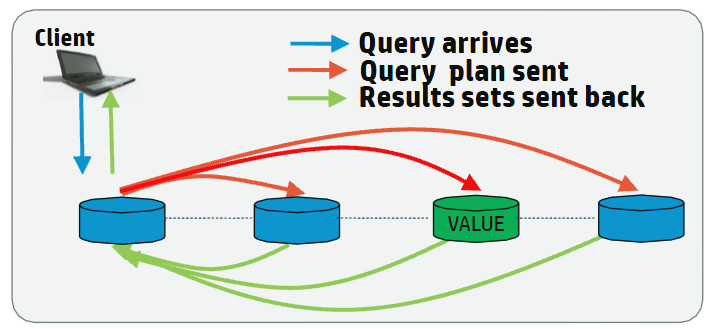

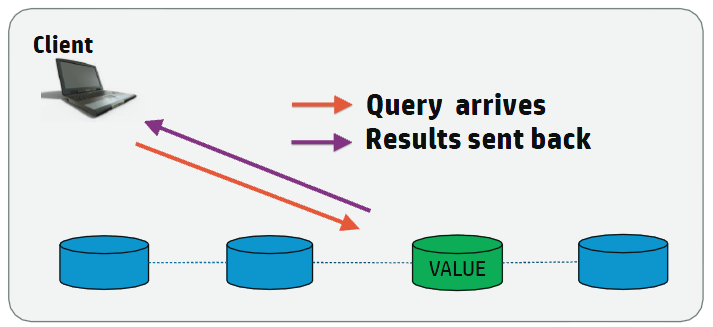

Native connection load balancing

Native connection load balancing helps spread the overhead caused by client connections on the hosts in the Vertica database. Both the server and the client must enable native connection load balancing. If enabled by both, then when the client initially connects to a host in the database, the host picks a host to handle the client connection from a list of the currently up hosts in the database, and informs the client which host it has chosen.

If the initially-contacted host does not choose itself to handle the connection, the client disconnects, then opens a second connection to the host selected by the first host. The connection process to this second host proceeds as usual—if SSL is enabled, then SSL negotiations begin, otherwise the client begins the authentication process. See About native connection load balancing for details.

To enable native load balancing on your client, set the ConnectionLoadBalance connection parameter to true either in the DSN entry or in the connection string. The following example demonstrates connecting to the database several times with native connection load balancing enabled, and fetching the name of the node handling the connection from the V_MONITOR.CURRENT_SESSION system table.

// Demonstrate enabling native connection load balancing.

// Standard i/o library

#include <stdlib.h>

#include <iostream>

#include <assert.h>

// Only needed for Windows clients

// #include <windows.h>

// SQL include files that define data types and ODBC API

// functions

#include <sql.h>

#include <sqlext.h>

#include <sqltypes.h>

using namespace std;

int main()

{

SQLRETURN ret; // Stores return value from ODBC API calls

SQLHENV hdlEnv; // Handle for the SQL environment object

// Allocate a SQL environment object

ret = SQLAllocHandle(SQL_HANDLE_ENV, SQL_NULL_HANDLE, &hdlEnv);

assert(SQL_SUCCEEDED(ret));

// Set the ODBC version we are going to use to

// 3.

ret = SQLSetEnvAttr(hdlEnv, SQL_ATTR_ODBC_VERSION,

(SQLPOINTER) SQL_OV_ODBC3, SQL_IS_UINTEGER);

assert(SQL_SUCCEEDED(ret));

// Allocate a database handle.

SQLHDBC hdlDbc;

ret = SQLAllocHandle(SQL_HANDLE_DBC, hdlEnv, &hdlDbc);

assert(SQL_SUCCEEDED(ret));

// Connect four times. If load balancing is on, client should

// connect to different nodes.

for (int x=1; x <= 4; x++) {

// Connect to the database using SQLDriverConnect. Set

// ConnectionLoadBalance to 1 (true) to enable load

// balancing.

cout << endl << "Connection attempt #" << x << "... ";

const char *connStr = "DSN=VMart;ConnectionLoadBalance=1;"

"UID=ExampleUser;PWD=password123";

ret = SQLDriverConnect(hdlDbc, NULL, (SQLCHAR*)connStr, SQL_NTS,

NULL, 0, NULL, SQL_DRIVER_NOPROMPT );

if(!SQL_SUCCEEDED(ret)) {

cout << "failed. Exiting." << endl;

exit(EXIT_FAILURE);

} else {

cout << "succeeded" << endl;

}

// We're connected. Query the v_monitor.current_session table to

// find the name of the node we've connected to.

// Set up a statement handle

SQLHSTMT hdlStmt;

ret = SQLAllocHandle(SQL_HANDLE_STMT, hdlDbc, &hdlStmt);

assert(SQL_SUCCEEDED(ret));

ret = SQLExecDirect( hdlStmt, (SQLCHAR*)"SELECT node_name FROM "

"V_MONITOR.CURRENT_SESSION;", SQL_NTS );

if(SQL_SUCCEEDED(ret)) {

// Bind variable to column in result set.

SQLTCHAR node_name[256];

ret = SQLBindCol(hdlStmt, 1, SQL_C_TCHAR, (SQLPOINTER)node_name,

sizeof(node_name), NULL);

while(SQL_SUCCEEDED(ret = SQLFetchScroll(hdlStmt, SQL_FETCH_NEXT,1))) {

// Print the bound variables, which now contain the values from the

// fetched row.

cout << "Connected to node " << node_name << endl;

}

}

// Free statement handle

SQLFreeHandle(SQL_HANDLE_STMT,hdlStmt);

cout << "Disconnecting." << endl;

ret = SQLDisconnect( hdlDbc );

assert(SQL_SUCCEEDED(ret));

}

// When done, free all of the handles to close them

// in an orderly fashion.

cout << endl << "Freeing handles..." << endl;

SQLFreeHandle(SQL_HANDLE_DBC, hdlDbc);

SQLFreeHandle(SQL_HANDLE_ENV, hdlEnv);

cout << "Done!" << endl;

exit(EXIT_SUCCESS);

}

Running the above example produces output similar to the following:

Connection attempt #1... succeeded

Connected to node v_vmart_node0001

Disconnecting.

Connection attempt #2... succeeded

Connected to node v_vmart_node0002

Disconnecting.

Connection attempt #3... succeeded

Connected to node v_vmart_node0003

Disconnecting.

Connection attempt #4... succeeded

Connected to node v_vmart_node0001

Disconnecting.

Freeing handles...

Done!
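Connection strings such as the one in the example above are semicolon-separated key=value pairs. The following is a minimal sketch of assembling one programmatically. buildConnectionString and Props are hypothetical names introduced here, not part of the ODBC API or the Vertica driver, and the helper makes no attempt to escape values that contain semicolons or braces.

```cpp
// Sketch: assemble an ODBC connection string from key/value pairs.
#include <cassert>
#include <cstddef>
#include <string>
#include <utility>
#include <vector>

// Ordered list of connection properties (hypothetical helper type).
typedef std::vector<std::pair<std::string, std::string> > Props;

// Joins "key=value" pairs with semicolons, in the order given.
// Assumption: values need no escaping.
std::string buildConnectionString(const Props& props)
{
    std::string out;
    for (std::size_t i = 0; i < props.size(); ++i) {
        assert(!props[i].first.empty());  // a property needs a name
        if (i > 0) out += ';';
        out += props[i].first + "=" + props[i].second;
    }
    return out;
}
```

The resulting string can be passed to SQLDriverConnect() through c_str(), cast to SQLCHAR* in the same way as connStr in the example above.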

Hostname-based load balancing

You can also balance workloads by resolving a single hostname to multiple IP addresses. The ODBC client driver load balances by automatically resolving the hostname to one of the specified IP addresses at random.

For example, suppose the hostname verticahost.example.com has the following entries in /etc/hosts:

192.0.2.0 verticahost.example.com

192.0.2.1 verticahost.example.com

192.0.2.2 verticahost.example.com

Specifying the hostname verticahost.example.com randomly resolves to one of the listed IP addresses.
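The effect can be modeled with a few lines of code. This is only a sketch of the behavior described above: pickAddress is a hypothetical function, and the uniform distribution is an assumption, since this section says only that the choice is random.

```cpp
// Sketch: choose one of several resolved addresses at random.
#include <cassert>
#include <cstddef>
#include <random>
#include <string>
#include <vector>

// Returns one address from addrs, chosen uniformly at random.
// Models the described behavior; it is not the driver's resolver code.
std::string pickAddress(const std::vector<std::string>& addrs,
                        std::mt19937& rng)
{
    assert(!addrs.empty());  // nothing to choose from otherwise
    std::uniform_int_distribution<std::size_t> dist(0, addrs.size() - 1);
    return addrs[dist(rng)];
}
```

Over many connections, each address in the list should receive a roughly equal share of the clients.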

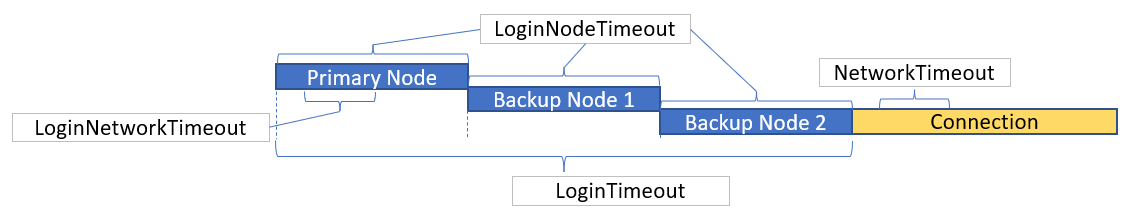

1.7 - Connection failover

If a client application attempts to connect to a host in the Vertica cluster that is down, the connection attempt fails when using the default connection configuration. This failure usually returns an error to the user. The user must either wait until the host recovers and retry the connection or manually edit the connection settings to choose another host.

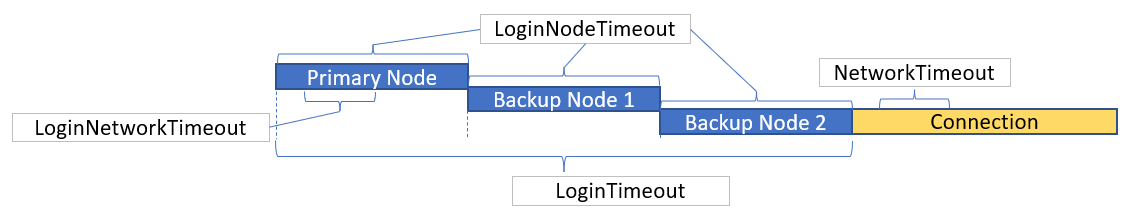

Due to Vertica Analytic Database's distributed architecture, you usually do not care which database host handles a client application's connection. You can use the client driver's connection failover feature to prevent the user from getting connection errors when the host specified in the connection settings is unreachable. The client driver gives you several ways to automatically attempt to connect to a different host if the one specified in the connection parameters is unreachable:

- Configure your DNS server to return multiple IP addresses for a host name. When you use this host name in the connection settings, the client attempts to connect to the first IP address from the DNS lookup. If the host at that IP address is unreachable, the client tries to connect to the second IP, and so on until it either manages to connect to a host or it runs out of IP addresses.

- Supply a list of backup hosts for the client driver to try if the primary host you specify in the connection parameters is unreachable.

- (JDBC only) Use driver-specific connection properties to manage timeouts before attempting to connect to the next node.

For all methods, the process of failover is transparent to the client application (other than specifying the list of backup hosts, if you choose to use the list method of failover). If the primary host is unreachable, the client driver automatically tries to connect to other hosts.

Failover only applies to the initial establishment of the client connection. If the connection breaks, the driver does not automatically try to reconnect to another host in the database.

Choosing a failover method

You usually choose to use one of the two failover methods. However, they do work together. If your DNS server returns multiple IP addresses and you supply a list of backup hosts, the client first tries all of the IPs returned by the DNS server, then the hosts in the backup list.

Note

If a host name in the backup host list resolves to multiple IP addresses, the client does not try all of them. It just tries the first IP address in the list.

The DNS method of failover centralizes the configuration of client failover. As you add new nodes to your Vertica Analytic Database cluster, you can choose to add them to the failover list by editing the DNS server settings. All client systems that use the DNS server to connect to Vertica Analytic Database automatically use connection failover without having to change any settings. However, this method does require administrative access to the DNS server that all clients use to connect to the Vertica Analytic Database cluster. This may not be possible in your organization.

Using the backup server list is easier than editing the DNS server settings. However, it decentralizes the failover feature. You may need to update the application settings on each client system if you make changes to your Vertica Analytic Database cluster.
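When both methods are combined, the order in which addresses are tried follows the rules given in this section: every IP address returned for the primary host name first, then only the first IP address of each backup host. The sketch below models that ordering. ResolvedHost and attemptOrder are hypothetical names, and the code is an illustration of the described behavior, not the driver's implementation.

```cpp
// Sketch: model the connection attempt order for combined failover.
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// A host name together with the IP addresses it resolves to.
struct ResolvedHost {
    std::string name;
    std::vector<std::string> ips;
};

// All IPs of the primary host come first, followed by only the
// first IP of each backup host, in list order.
std::vector<std::string> attemptOrder(const ResolvedHost& primary,
                                      const std::vector<ResolvedHost>& backups)
{
    std::vector<std::string> order(primary.ips.begin(), primary.ips.end());
    for (std::size_t i = 0; i < backups.size(); ++i) {
        if (!backups[i].ips.empty())
            order.push_back(backups[i].ips.front());
    }
    return order;
}
```

A backup host that resolves to two addresses therefore contributes a single entry to the attempt order.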

Using DNS failover

To use DNS failover, you need to change your DNS server's settings to map a single host name to multiple IP addresses of hosts in your Vertica Analytic Database cluster. You then have all client applications use this host name to connect to Vertica Analytic Database.

You can choose to have your DNS server return as many IP addresses for the host name as you want. In smaller clusters, you may choose to have it return the IP addresses of all of the hosts in your cluster. However, for larger clusters, you should consider choosing a subset of the hosts to return. Otherwise there can be a long delay as the client driver tries unsuccessfully to connect to each host in a database that is down.

Using the backup host list

To enable backup list-based connection failover, your client application has to specify at least one IP address or host name of a host in the BackupServerNode parameter. The host name or IP can optionally be followed by a colon and a port number. If not supplied, the driver defaults to the standard Vertica port number (5433). To list multiple hosts, separate them by a comma.
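To make the format concrete, the sketch below splits a BackupServerNode value into host and port pairs and applies the default port when none is given. parseBackupServerNode and HostPort are hypothetical names. The parsing is deliberately simplified (no whitespace trimming, no IPv6 literals, no validation) and is not how the driver itself reads the parameter.

```cpp
// Sketch: split a BackupServerNode value into (host, port) pairs.
#include <cassert>
#include <cstddef>
#include <cstdlib>
#include <string>
#include <utility>
#include <vector>

typedef std::pair<std::string, int> HostPort;

// Parses a value such as "host1:5434,host2".
// Entries without a port get 5433, the standard Vertica port.
std::vector<HostPort> parseBackupServerNode(const std::string& value)
{
    const int kDefaultPort = 5433;
    std::vector<HostPort> hosts;
    std::size_t start = 0;
    while (start <= value.size()) {
        std::size_t comma = value.find(',', start);
        if (comma == std::string::npos) comma = value.size();
        std::string entry = value.substr(start, comma - start);
        if (!entry.empty()) {
            std::size_t colon = entry.find(':');
            if (colon == std::string::npos) {
                hosts.push_back(HostPort(entry, kDefaultPort));
            } else {
                int port = std::atoi(entry.substr(colon + 1).c_str());
                hosts.push_back(HostPort(entry.substr(0, colon), port));
            }
        }
        start = comma + 1;  // continue after the separator
    }
    return hosts;
}
```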

The following example demonstrates setting the BackupServerNode connection parameter to specify additional hosts for the connection attempt. The connection string intentionally has a non-existent node, so that the initial connection fails. The client driver has to resort to trying the backup hosts to establish a connection to Vertica.

// Demonstrate using connection failover.

// Standard i/o library

#include <stdlib.h>

#include <iostream>

#include <assert.h>

// Only needed for Windows clients

// #include <windows.h>

// SQL include files that define data types and ODBC API

// functions

#include <sql.h>

#include <sqlext.h>

#include <sqltypes.h>

using namespace std;

int main()

{

SQLRETURN ret; // Stores return value from ODBC API calls

SQLHENV hdlEnv; // Handle for the SQL environment object

// Allocate a SQL environment object

ret = SQLAllocHandle(SQL_HANDLE_ENV, SQL_NULL_HANDLE, &hdlEnv);

assert(SQL_SUCCEEDED(ret));

// Set the ODBC version we are going to use to

// 3.

ret = SQLSetEnvAttr(hdlEnv, SQL_ATTR_ODBC_VERSION,

(SQLPOINTER) SQL_OV_ODBC3, SQL_IS_UINTEGER);

assert(SQL_SUCCEEDED(ret));

// Allocate a database handle.

SQLHDBC hdlDbc;

ret = SQLAllocHandle(SQL_HANDLE_DBC, hdlEnv, &hdlDbc);

assert(SQL_SUCCEEDED(ret));

/* DSN for this connection specifies a bad node, and good backup nodes:

[VMartBadNode]

Description=VMart Vertica Database

Driver=/opt/vertica/lib64/libverticaodbc.so

Database=VMart

Servername=badnode.example.com

BackupServerNode=v_vmart_node0002.example.com,v_vmart_node0003.example.com

*/

// Connect to the database using SQLConnect

cout << "Connecting to database." << endl;

const char *dsnName = "VMartBadNode"; // Name of the DSN

const char* userID = "ExampleUser"; // Username

const char* passwd = "password123"; // password

ret = SQLConnect(hdlDbc, (SQLCHAR*)dsnName,

SQL_NTS,(SQLCHAR*)userID,SQL_NTS,

(SQLCHAR*)passwd, SQL_NTS);

if(!SQL_SUCCEEDED(ret)) {

cout << "Could not connect to database." << endl;

exit(EXIT_FAILURE);

} else {

cout << "Connected to database." << endl;

}

// We're connected. Query the v_monitor.current_session table to

// find the name of the node we've connected to.

// Set up a statement handle

SQLHSTMT hdlStmt;

ret = SQLAllocHandle(SQL_HANDLE_STMT, hdlDbc, &hdlStmt);

assert(SQL_SUCCEEDED(ret));

ret = SQLExecDirect( hdlStmt, (SQLCHAR*)"SELECT node_name FROM "

"v_monitor.current_session;", SQL_NTS );

if(SQL_SUCCEEDED(ret)) {

// Bind variable to column in result set.

SQLTCHAR node_name[256];

ret = SQLBindCol(hdlStmt, 1, SQL_C_TCHAR, (SQLPOINTER)node_name,

sizeof(node_name), NULL);

while(SQL_SUCCEEDED(ret = SQLFetchScroll(hdlStmt, SQL_FETCH_NEXT,1))) {

// Print the bound variables, which now contain the values from the

// fetched row.

cout << "Connected to node " << node_name << endl;

}

}

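// Free the statement handle before disconnecting; SQLDisconnect

// releases any statements still attached to the connection.

SQLFreeHandle(SQL_HANDLE_STMT, hdlStmt);

cout << "Disconnecting." << endl;

ret = SQLDisconnect( hdlDbc );

assert(SQL_SUCCEEDED(ret));

// When done, free the remaining handles to close them

// in an orderly fashion.

cout << endl << "Freeing handles..." << endl;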

SQLFreeHandle(SQL_HANDLE_DBC, hdlDbc);

SQLFreeHandle(SQL_HANDLE_ENV, hdlEnv);

cout << "Done!" << endl;

exit(EXIT_SUCCESS);

}

When run, the example's output on the system console is similar to the following:

Connecting to database.

Connected to database.

Connected to node v_vmart_node0002

Disconnecting.

Freeing handles...

Done!

Notice that the connection was made to the first node in the backup list (node 2).

Note

When native connection load balancing is enabled, the additional servers specified in the BackupServerNode connection parameter are only used for the initial connection to a Vertica host. If the host redirects the client to another host in the database cluster to handle its connection request, the second connection does not use the backup node list. This is rarely an issue, since native connection load balancing is aware of which nodes are currently up in the database. See Load balancing for more information.

1.8 - Prompting windows users for missing connection properties

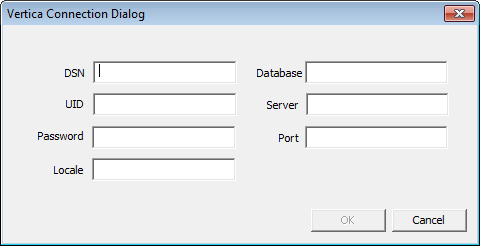

The Vertica Windows ODBC driver can prompt the user for connection information if required information is missing.

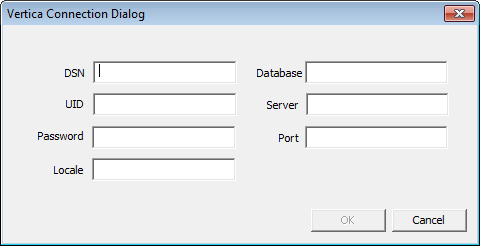

The Vertica Windows ODBC driver can prompt the user for connection information if required information is missing. The driver displays the Vertica Connection Dialog if the client application calls SQLDriverConnect to connect to Vertica and either of the following is true:

- The DriverCompletion property is set to SQL_DRIVER_PROMPT.

- The DriverCompletion property is set to SQL_DRIVER_COMPLETE or SQL_DRIVER_COMPLETE_REQUIRED and the connection string or DSN being used to connect is missing the server, database, or port information.

If either of the above conditions is true, the driver displays the Vertica Connection Dialog to prompt the user for connection information. The dialog has all of the property values supplied in the connection string or DSN filled in.

Note

Your connection string at least needs to specify Vertica as the driver, otherwise Windows will not know to use the Vertica ODBC driver to try to open the connection.

The required fields on the connection dialog are Database, UID, Server, and Port. Once these are filled in, the form enables the OK button.

If the user clicks Cancel on the dialog, the SQLDriverConnect function call returns SQL_NO_DATA immediately, without attempting to connect to Vertica. If the user supplies incomplete or incorrect information for the connection, the connection function returns SQL_ERROR after the connection attempt fails.

Note

If the DriverCompletion property of the SQLDriverConnect function call is SQL_DRIVER_NOPROMPT, the ODBC driver immediately returns a SQL_ERROR indicating that it cannot connect because not enough information has been supplied and the driver is not allowed to prompt the user for the missing information.
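An application can branch on the return value of SQLDriverConnect to tell these outcomes apart. The sketch below maps return codes to the situations described in this section. To stay compilable without the ODBC headers it defines local copies of the standard return-code values; real code should use the SQL_SUCCESS, SQL_SUCCESS_WITH_INFO, SQL_NO_DATA, and SQL_ERROR macros from sql.h instead. describeConnectResult and the k-prefixed constants are hypothetical names.

```cpp
// Sketch: interpret the return value of a connection attempt.
#include <cassert>
#include <string>

// Local stand-ins for the standard ODBC return codes (assumption:
// used here only so the sketch builds without <sql.h>).
enum {
    kSqlSuccess = 0,          // SQL_SUCCESS
    kSqlSuccessWithInfo = 1,  // SQL_SUCCESS_WITH_INFO
    kSqlNoData = 100,         // SQL_NO_DATA
    kSqlError = -1            // SQL_ERROR
};

// Maps a return code to the outcome this section describes.
std::string describeConnectResult(int rc)
{
    switch (rc) {
    case kSqlSuccess:         return "connected";
    case kSqlSuccessWithInfo: return "connected, diagnostics available";
    case kSqlNoData:          return "user cancelled the connection dialog";
    case kSqlError:           return "connection failed";
    default:                  return "unexpected return code";
    }
}
```

Treating SQL_NO_DATA separately lets the application skip error reporting when the user simply dismissed the dialog.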

1.9 - Prompting windows users for passwords

If the connection string or DSN supplied to the SQLDriverConnect function that client applications call to connect to Vertica lacks any of the required connection properties, the Vertica Windows ODBC driver opens a dialog box to prompt the user to enter the missing information (see Prompting windows users for missing connection properties). The user's password is not normally considered a required connection property because Vertica user accounts may not have a password. If the password property is missing, the ODBC driver still tries to connect to Vertica without supplying a password.

You can use the PromptOnNoPassword DSN parameter to force the ODBC driver to treat the password as a required connection property. This parameter is useful if you do not want to store passwords in DSN entries. Passwords saved in DSN entries are insecure, since they are stored as clear text in the Windows registry and therefore visible to other users on the same system.

Two other factors also decide whether the ODBC driver displays the Vertica Connection Dialog. These are, in order of priority, the value of the DriverCompletion parameter of the SQLDriverConnect function call and whether the DSN or connection string contains a password.

The following table shows how the PromptOnNoPassword DSN parameter, the DriverCompletion parameter of the SQLDriverConnect function, and whether the DSN or connection string contains a password interact to control whether the Vertica Connection dialog appears.

| PromptOnNoPassword Setting | DriverCompletion Value | DSN or Connection String Contains Password? | Vertica Connection Dialog Displays? | Notes |
| --- | --- | --- | --- | --- |
| any value | SQL_DRIVER_PROMPT | any case | Yes | This DriverCompletion value forces the dialog to always appear, even if all required connection properties are supplied. |
| any value | SQL_DRIVER_NOPROMPT | any case | No | This DriverCompletion value always prevents the dialog from appearing. |
| any value | SQL_DRIVER_COMPLETE | Yes | No | Connection dialog displays if another required connection property is missing. |
| true | SQL_DRIVER_COMPLETE | No | Yes | |
| false (default) | SQL_DRIVER_COMPLETE | No | No | Connection dialog displays if another required connection property is missing. |
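The rows of the table reduce to a small decision function. The sketch below encodes only the password-related rules. It does not model the case, mentioned in the Notes column, where the dialog appears because some other required property is missing. DriverCompletion here is a hypothetical stand-in enum, not the ODBC constants, and passwordRulesShowDialog is a name introduced for illustration.

```cpp
// Sketch: encode the PromptOnNoPassword decision table.
#include <cassert>

// Stand-ins for SQL_DRIVER_PROMPT, SQL_DRIVER_NOPROMPT,
// and SQL_DRIVER_COMPLETE (hypothetical enum).
enum DriverCompletion { kPrompt, kNoPrompt, kComplete };

// Returns true when the password rules alone cause the dialog to
// display. hasPwdProperty is true whenever a PWD property exists,
// even if its value is empty.
bool passwordRulesShowDialog(bool promptOnNoPassword,
                             DriverCompletion completion,
                             bool hasPwdProperty)
{
    if (completion == kPrompt)   return true;   // dialog always appears
    if (completion == kNoPrompt) return false;  // dialog never appears
    // kComplete: only a missing PWD property combined with
    // PromptOnNoPassword=true forces the dialog.
    return promptOnNoPassword && !hasPwdProperty;
}
```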

The following example code demonstrates using the PromptOnNoPassword DSN parameter along with a system DSN in C++:

wstring connectString = L"DSN=VerticaDSN;PromptOnNoPassword=1;";

retcode = SQLDriverConnect(

hdbc,

0,

(SQLWCHAR*) connectString.c_str(),

connectString.length(),

OutConnStr,

255,

&OutConnStrLen,

SQL_DRIVER_COMPLETE);

No password entry vs. empty passwords

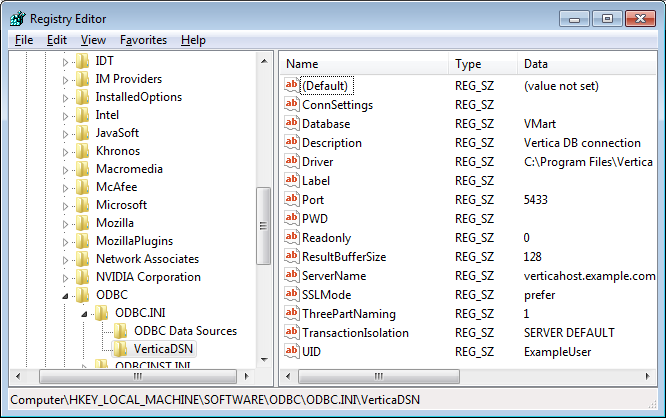

There is a difference between not having a password property in the connection string or DSN and having an empty password. The PromptOnNoPassword DSN parameter only has an effect if the connection string or DSN does not have a PWD property (which holds the user's password). If it does, even if it is empty, PromptOnNoPassword will not prompt the Windows ODBC driver to display the Vertica Connection Dialog.

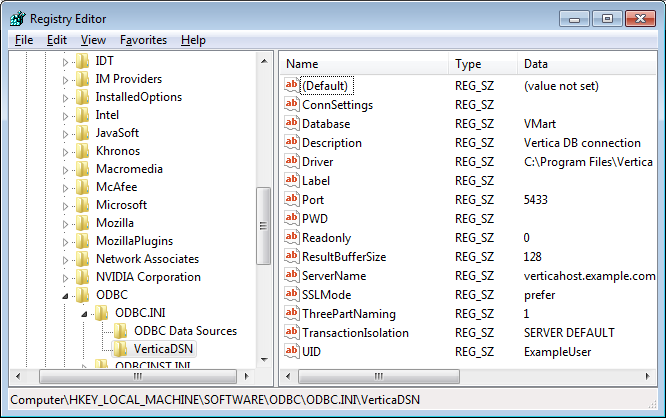

This difference can cause confusion if you are using a DSN to provide the properties for your connection. Once you enter a password for a DSN connection in the Windows ODBC Manager and save it, Windows adds a PWD property to the DSN definition in the registry. If you later delete the password, the PWD property remains in the DSN definition; its value is just set to an empty string. The PWD property is created even if you just use the Test button on the ODBC Manager dialog to test the DSN and later clear it before saving the DSN.

Once the password has been set, the only way to remove the PWD property from the DSN definition is to delete it using the Windows Registry Editor:

-

On the Windows Start menu, click Run.

-

In the Run dialog, type regedit, then click OK.

-

In the Registry Editor window, click Edit > Find (or press Ctrl+F).

-

In the Find window, enter the name of the DSN whose PWD property you want to delete and click OK.

-

If the find operation did not locate a folder under the ODBC.INI folder, click Edit > Find Next (or press F3) until the folder matching your DSN's name is highlighted.

-

Select the PWD entry and press Delete.

-

Click Yes to confirm deleting the value.

The DSN now does not have a PWD property and can trigger the connection dialog to appear when used along with PromptOnNoPassword=true and a DriverCompletion value of SQL_DRIVER_COMPLETE.
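The distinction between a missing PWD property and an empty one can be made concrete with a small check on a connection string. The following is a minimal sketch in plain C, not part of the Vertica driver: `pwd_state_t` and `pwd_state()` are hypothetical names, and the parser is deliberately simplified (it assumes attributes are separated by semicolons and does not handle values quoted with braces or surrounding whitespace).

```c
#include <assert.h>
#include <ctype.h>
#include <stddef.h>
#include <string.h>

typedef enum { PWD_ABSENT, PWD_EMPTY, PWD_SET } pwd_state_t;

/* Returns nonzero if the four characters at s spell "PWD=" (the
 * keyword is matched case-insensitively). */
static int is_pwd_key(const char *s)
{
    return toupper((unsigned char)s[0]) == 'P' &&
           toupper((unsigned char)s[1]) == 'W' &&
           toupper((unsigned char)s[2]) == 'D' &&
           s[3] == '=';
}

/* Classifies the PWD property in a semicolon-separated connection string. */
pwd_state_t pwd_state(const char *conn_str)
{
    assert(conn_str != NULL);
    const char *p = conn_str;
    while (*p != '\0') {
        /* Each attribute runs up to the next ';' or the end of the string. */
        const char *end = strchr(p, ';');
        size_t len = end ? (size_t)(end - p) : strlen(p);
        if (len >= 4 && is_pwd_key(p)) {
            /* "PWD=" with nothing after it is an empty password. */
            return (len == 4) ? PWD_EMPTY : PWD_SET;
        }
        if (end == NULL) {
            break;
        }
        p = end + 1;
    }
    return PWD_ABSENT;
}
```

Only the `PWD_ABSENT` case corresponds to the situation in which PromptOnNoPassword can cause the dialog to appear; `PWD_EMPTY` corresponds to the empty-password case described above.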

1.10 - Setting the locale and encoding for ODBC sessions

Vertica provides the following methods to set the locale and encoding for an ODBC session:

-

Specify the locale for all connections made using the DSN:

-

Set the Locale connection parameter in the connection string passed to the SQLDriverConnect() function. For example:

SQLDriverConnect(conn, NULL, (SQLCHAR*)"DSN=Vertica;Locale=en_GB@collation=binary", SQL_NTS, szConnOut, sizeof(szConnOut), &iAvailable, SQL_DRIVER_NOPROMPT)

-

Use SQLSetConnectAttr() to set the encoding and locale. In general, you should always set the encoding with this function as opposed to, for example, setting it in the DSN.

-

Pass the SQL_ATTR_VERTICA_LOCALE constant and the ICU string as the attribute value. For example:

SQLSetConnectAttr(hdlDbc, SQL_ATTR_VERTICA_LOCALE, (SQLCHAR*)newLocale,

SQL_NTS);

-

Pass the SQL_ATTR_APP_WCHAR_TYPE constant and the encoding as the attribute value. For example:

rc = SQLSetConnectAttr (hdbc, SQL_ATTR_APP_WCHAR_TYPE, (void *)SQL_DD_CP_UTF16, SQL_IS_INTEGER);

Notes

-

Having the client system use a non-Unicode locale (such as setting LANG=C on Linux platforms) while using a Unicode locale for the connection to Vertica can result in errors such as "(10170) String data right truncation on data from data source" if data received from Vertica isn't in UTF-8 format. The driver allocates string memory based on the system's locale setting, and non-UTF-8 data can trigger an overrun. You can avoid these errors by always using a Unicode locale on the client system.

If you specify a locale either in the connection string or in the DSN, the call to the connection function returns SQL_SUCCESS_WITH_INFO on a successful connection, with messages about the state of the locale.

-

ODBC applications can be in either ANSI or Unicode mode:

-

If Unicode, the encoding used by ODBC is UCS-2.

-

If ANSI, the data must be in single-byte ASCII, which is compatible with UTF-8 on the database server.

The ODBC driver converts UCS-2 to UTF-8 when passing to the Vertica server and converts data sent by the Vertica server from UTF-8 to UCS-2.

-

If the end-user application's data is not already in UCS-2, the application is responsible for converting the input data to UCS-2, or unexpected results could occur. For example:

-

Non-UCS-2 data passed to ODBC APIs is interpreted as UCS-2, which can produce an invalid UCS-2 symbol and result in errors.

-

Or the symbol provided in the alternate encoding could be a valid UCS-2 symbol; in this case, incorrect data is inserted into the database.

ODBC applications should set the correct server session locale using SQLSetConnectAttr (if different from the database-wide setting) to get the proper collation and string function behavior on the server.
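To illustrate the UCS-2 to UTF-8 conversion mentioned in the notes above, the following minimal sketch encodes a single UCS-2 code unit as UTF-8. It is an illustration of the encoding rules only, not the driver's actual implementation, and the function name `ucs2_to_utf8()` is hypothetical. Surrogate values (0xD800 through 0xDFFF) are rejected because they are not valid UCS-2 characters, which is one way an "invalid UCS-2 symbol" can arise.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Encodes one UCS-2 code unit as UTF-8 into out, which must have room
 * for at least 3 bytes. Returns the number of bytes written, or 0 if
 * the unit is a surrogate and therefore not a valid UCS-2 character. */
size_t ucs2_to_utf8(uint16_t unit, unsigned char out[3])
{
    assert(out != NULL);
    if (unit >= 0xD800 && unit <= 0xDFFF) {
        return 0; /* invalid in UCS-2 */
    }
    if (unit < 0x80) {
        /* ASCII range: one byte, identical in UTF-8. */
        out[0] = (unsigned char)unit;
        return 1;
    }
    if (unit < 0x800) {
        /* Two bytes: 110xxxxx 10xxxxxx */
        out[0] = (unsigned char)(0xC0 | (unit >> 6));
        out[1] = (unsigned char)(0x80 | (unit & 0x3F));
        return 2;
    }
    /* Three bytes: 1110xxxx 10xxxxxx 10xxxxxx */
    out[0] = (unsigned char)(0xE0 | (unit >> 12));
    out[1] = (unsigned char)(0x80 | ((unit >> 6) & 0x3F));
    out[2] = (unsigned char)(0x80 | (unit & 0x3F));
    return 3;
}
```

The one-byte case shows why single-byte ASCII data is compatible with UTF-8 on the server: code units below 0x80 are passed through unchanged.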

The following example code demonstrates setting the locale using both the connection string and with the SQLSetConnectAttr() function.

// Standard i/o library

#include <stdio.h>

#include <stdlib.h>

// Only needed for Windows clients

// #include <windows.h>

// SQL include files that define data types and ODBC API

// functions

#include <sql.h>

#include <sqlext.h>

#include <sqltypes.h>

// Vertica-specific definitions. This include file is located in

// /opt/vertica/include on database hosts.

#include <verticaodbc.h>

int main()

{

SQLRETURN ret; // Stores return value from ODBC API calls

SQLHENV hdlEnv; // Handle for the SQL environment object

// Allocate a SQL environment object

ret = SQLAllocHandle(SQL_HANDLE_ENV, SQL_NULL_HANDLE, &hdlEnv);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not allocate a handle.\n");

exit(EXIT_FAILURE);

} else {

printf("Allocated an environment handle.\n");

}

// Set the ODBC version we are going to use to 3.

ret = SQLSetEnvAttr(hdlEnv, SQL_ATTR_ODBC_VERSION,

(SQLPOINTER) SQL_OV_ODBC3, SQL_IS_UINTEGER);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not set application version to ODBC 3.\n");

exit(EXIT_FAILURE);

} else {

printf("Set application version to ODBC 3.\n");

}

// Allocate a database handle.

SQLHDBC hdlDbc;

ret = SQLAllocHandle(SQL_HANDLE_DBC, hdlEnv, &hdlDbc);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not allocate database handle.\n");

exit(EXIT_FAILURE);

} else {

printf("Allocated Database handle.\n");

}

// Connect to the database using SQLDriverConnect

printf("Connecting to database.\n");

// Set the locale to English in Great Britain.

const char *connStr = "DSN=ExampleDB;locale=en_GB;"

"UID=dbadmin;PWD=password123";

ret = SQLDriverConnect(hdlDbc, NULL, (SQLCHAR*)connStr, SQL_NTS,

NULL, 0, NULL, SQL_DRIVER_NOPROMPT );

if(!SQL_SUCCEEDED(ret)) {

printf("Could not connect to database.\n");

exit(EXIT_FAILURE);

} else {

printf("Connected to database.\n");

}

// Get the Locale

char locale[256];

SQLGetConnectAttr(hdlDbc, SQL_ATTR_VERTICA_LOCALE, locale, sizeof(locale),

0);

printf("Locale is set to: %s\n", locale);

// Set the locale to a new value

const char* newLocale = "en_GB";

SQLSetConnectAttr(hdlDbc, SQL_ATTR_VERTICA_LOCALE, (SQLCHAR*)newLocale,

SQL_NTS);

// Get the Locale again

SQLGetConnectAttr(hdlDbc, SQL_ATTR_VERTICA_LOCALE, locale, sizeof(locale),

0);

printf("Locale is now set to: %s\n", locale);

// Set the encoding

SQLSetConnectAttr (hdlDbc, SQL_ATTR_APP_WCHAR_TYPE, (void *)SQL_DD_CP_UTF16,

SQL_IS_INTEGER);

// When done, free all of the handles to close them

// in an orderly fashion.

printf("Disconnecting and freeing handles.\n");

ret = SQLDisconnect( hdlDbc );

if(!SQL_SUCCEEDED(ret)) {

printf("Error disconnecting from database. Transaction still open?\n");

exit(EXIT_FAILURE);

}

SQLFreeHandle(SQL_HANDLE_DBC, hdlDbc);

SQLFreeHandle(SQL_HANDLE_ENV, hdlEnv);

exit(EXIT_SUCCESS);

}

1.11 - AUTOCOMMIT and ODBC transactions

The AUTOCOMMIT connection attribute controls whether INSERT, ALTER, COPY and other data-manipulation statements are automatically committed after they complete. By default, AUTOCOMMIT is enabled, so every statement is committed as soon as it executes. This is often not the best setting to use, since it is less efficient. Also, you often want to control whether a set of statements is committed as a whole, rather than having each individual statement committed. For example, you may only want to commit a series of inserts if all of the inserts succeed. With AUTOCOMMIT disabled, you can roll back the transaction if one of the statements fails.

If AUTOCOMMIT is on, the results of statements are committed immediately after they are executed. You cannot roll back a statement executed in AUTOCOMMIT mode.

For example, when AUTOCOMMIT is on, the following single INSERT statement is automatically committed:

ret = SQLExecDirect(hdlStmt, (SQLCHAR*)"INSERT INTO customers VALUES(500,"

"'Smith, Sam', '123-456-789');", SQL_NTS);

If AUTOCOMMIT is off, you need to manually commit the transaction after executing a statement. For example:

ret = SQLExecDirect(hdlStmt, (SQLCHAR*)"INSERT INTO customers VALUES(500,"

"'Smith, Sam', '123-456-789');", SQL_NTS);

// Other inserts and data manipulations

// Commit the statement(s)

ret = SQLEndTran(SQL_HANDLE_DBC, hdlDbc, SQL_COMMIT);

The inserted row is only committed when you call SQLEndTran(). You can roll back the INSERT and other statements at any point before committing the transaction.

Note

Prepared statements cache the AUTOCOMMIT setting when you create them using SQLPrepare(). Later changing the connection's AUTOCOMMIT setting has no effect on the AUTOCOMMIT settings of previously created prepared statements. See Using prepared statements for details.

The following example demonstrates turning off AUTOCOMMIT, executing an insert, then manually committing the transaction.

// Some standard headers

#include <stdio.h>

#include <stdlib.h>

// Only needed for Windows clients

// #include <windows.h>

// Standard ODBC headers

#include <sql.h>

#include <sqltypes.h>

#include <sqlext.h>

int main()

{

// Set up the ODBC environment

SQLRETURN ret;

SQLHENV hdlEnv;

ret = SQLAllocHandle(SQL_HANDLE_ENV, SQL_NULL_HANDLE, &hdlEnv);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not allocate a handle.\n");

exit(EXIT_FAILURE);

} else {

printf("Allocated an environment handle.\n");

}

// Tell ODBC that the application uses ODBC 3.

ret = SQLSetEnvAttr(hdlEnv, SQL_ATTR_ODBC_VERSION,

(SQLPOINTER) SQL_OV_ODBC3, SQL_IS_UINTEGER);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not set application version to ODBC3.\n");

exit(EXIT_FAILURE);

} else {

printf("Set application to ODBC 3.\n");

}

// Allocate a database handle.

SQLHDBC hdlDbc;

ret = SQLAllocHandle(SQL_HANDLE_DBC, hdlEnv, &hdlDbc);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not allocate database handle.\n");

exit(EXIT_FAILURE);

} else {

printf("Allocated Database handle.\n");

}

// Connect to the database

printf("Connecting to database.\n");

const char *dsnName = "ExampleDB";

const char* userID = "dbadmin";

const char* passwd = "password123";

ret = SQLConnect(hdlDbc, (SQLCHAR*)dsnName,

SQL_NTS,(SQLCHAR*)userID,SQL_NTS,

(SQLCHAR*)passwd, SQL_NTS);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not connect to database.\n");

exit(EXIT_FAILURE);

} else {

printf("Connected to database.\n");

}

// Get the AUTOCOMMIT state

SQLINTEGER autoCommitState;

SQLGetConnectAttr(hdlDbc, SQL_ATTR_AUTOCOMMIT, &autoCommitState, 0, NULL);

printf("Autocommit is set to: %d\n", autoCommitState);

// Disable AUTOCOMMIT

printf("Disabling autocommit.\n");

ret = SQLSetConnectAttr(hdlDbc, SQL_ATTR_AUTOCOMMIT,

(SQLPOINTER)SQL_AUTOCOMMIT_OFF, SQL_IS_UINTEGER);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not disable autocommit.\n");

exit(EXIT_FAILURE);

}

// Get the AUTOCOMMIT state again

SQLGetConnectAttr(hdlDbc, SQL_ATTR_AUTOCOMMIT, &autoCommitState, 0, NULL);

printf("Autocommit is set to: %d\n", autoCommitState);

// Set up a statement handle

SQLHSTMT hdlStmt;

SQLAllocHandle(SQL_HANDLE_STMT, hdlDbc, &hdlStmt);

// Create a table to hold the data

SQLExecDirect(hdlStmt, (SQLCHAR*)"DROP TABLE IF EXISTS customers",

SQL_NTS);

SQLExecDirect(hdlStmt, (SQLCHAR*)"CREATE TABLE customers "

"(CustID int, CustName varchar(100), Phone_Number char(15));",

SQL_NTS);

// Insert a single row.

ret = SQLExecDirect(hdlStmt, (SQLCHAR*)"INSERT INTO customers VALUES(500,"

"'Smith, Sam', '123-456-789');", SQL_NTS);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not perform single insert.\n");

} else {

printf("Performed single insert.\n");

}

// Need to commit the transaction before closing, since autocommit is

// disabled. Otherwise SQLDisconnect returns an error.

printf("Committing transaction.\n");

ret = SQLEndTran(SQL_HANDLE_DBC, hdlDbc, SQL_COMMIT);

if(!SQL_SUCCEEDED(ret)) {

printf("Error committing transaction.\n");

exit(EXIT_FAILURE);

}

// Clean up

printf("Free handles.\n");

ret = SQLDisconnect(hdlDbc);

if(!SQL_SUCCEEDED(ret)) {

printf("Error disconnecting from database. Transaction still open?\n");

exit(EXIT_FAILURE);

}

SQLFreeHandle(SQL_HANDLE_STMT, hdlStmt);

SQLFreeHandle(SQL_HANDLE_DBC, hdlDbc);

SQLFreeHandle(SQL_HANDLE_ENV, hdlEnv);

exit(EXIT_SUCCESS);

}

Running the above code results in the following output:

Allocated an environment handle.

Set application to ODBC 3.

Allocated Database handle.

Connecting to database.

Connected to database.

Autocommit is set to: 1

Disabling autocommit.

Autocommit is set to: 0

Performed single insert.

Committing transaction.

Free handles.

1.12 - Retrieving data

To retrieve data through ODBC, you execute a query that returns a result set (SELECT, for example), then retrieve the results using one of two methods:

-

Use the SQLFetch() function to retrieve a row of the result set, then access column values in the row by calling SQLGetData().

-

Use the SQLBindCol() function to bind a variable or array to a column in the result set, then call SQLExtendedFetch() or SQLFetchScroll() to read a row of the result set and insert its values into the variable or array.

In both methods you loop through the result set until you either reach the end (signaled by the SQL_NO_DATA return status) or encounter an error.

Note

Vertica supports one cursor per connection. Attempting to use more than one cursor per connection will result in an error. For example, you receive an error if you execute a statement while another statement has a result set open.

The following code example demonstrates retrieving data from Vertica by:

-

Connecting to the database.

-

Executing a SELECT statement that returns the IDs and names of all tables.

-

Binding two variables to the two columns in the result set.

-

Looping through the result set, printing the ID and name values.

// Demonstrate running a query and getting results by querying the tables

// system table for a list of all tables in the current schema.

// Some standard headers

#include <stdlib.h>

#include <sstream>

#include <iostream>

#include <assert.h>

// Standard ODBC headers

#include <sql.h>

#include <sqltypes.h>

#include <sqlext.h>

// Use std namespace to make output easier

using namespace std;

// Helper function to print SQL error messages.

template <typename HandleT>

void reportError(int handleTypeEnum, HandleT hdl)

{

// Get the status records.

SQLSMALLINT i, MsgLen;

SQLRETURN ret2;

SQLCHAR SqlState[6], Msg[SQL_MAX_MESSAGE_LENGTH];

SQLINTEGER NativeError;

i = 1;

cout << endl;

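// Stop on SQL_NO_DATA and also on any error return, so that an

// invalid handle cannot cause an endless loop.

while (SQL_SUCCEEDED(ret2 = SQLGetDiagRec(handleTypeEnum, hdl, i, SqlState,

&NativeError, Msg, sizeof(Msg), &MsgLen))) {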

cout << "error record #" << i++ << endl;

cout << "sqlstate: " << SqlState << endl;

cout << "detailed msg: " << Msg << endl;

cout << "native error code: " << NativeError << endl;

}

}

typedef struct {

SQLHENV hdlEnv;

SQLHDBC hdlDbc;

} DBConnection;

void connect(DBConnection *pConnInfo)

{

// Set up the ODBC environment

SQLRETURN ret;

ret = SQLAllocHandle(SQL_HANDLE_ENV, SQL_NULL_HANDLE, &pConnInfo->hdlEnv);

assert(SQL_SUCCEEDED(ret));

// Tell ODBC that the application uses ODBC 3.

ret = SQLSetEnvAttr(pConnInfo->hdlEnv, SQL_ATTR_ODBC_VERSION,

(SQLPOINTER)SQL_OV_ODBC3, SQL_IS_UINTEGER);

assert(SQL_SUCCEEDED(ret));

// Allocate a database handle.

ret = SQLAllocHandle(SQL_HANDLE_DBC, pConnInfo->hdlEnv, &pConnInfo->hdlDbc);

assert(SQL_SUCCEEDED(ret));

// Connect to the database

cout << "Connecting to database." << endl;

const char* dsnName = "ExampleDB";

const char* userID = "dbadmin";

const char* passwd = "password123";

ret = SQLConnect(pConnInfo->hdlDbc, (SQLCHAR*)dsnName,

SQL_NTS, (SQLCHAR*)userID, SQL_NTS,

(SQLCHAR*)passwd, SQL_NTS);

if (!SQL_SUCCEEDED(ret)) {

cout << "Could not connect to database" << endl;

reportError<SQLHDBC>(SQL_HANDLE_DBC, pConnInfo->hdlDbc);

exit(EXIT_FAILURE);

}

else {

cout << "Connected to database." << endl;

}

}

void disconnect(DBConnection *pConnInfo)

{

SQLRETURN ret;

// Clean up by shutting down the connection

cout << "Free handles." << endl;

ret = SQLDisconnect(pConnInfo->hdlDbc);

if (!SQL_SUCCEEDED(ret)) {

cout << "Error disconnecting. Transaction still open?" << endl;

exit(EXIT_FAILURE);

}

SQLFreeHandle(SQL_HANDLE_DBC, pConnInfo->hdlDbc);

SQLFreeHandle(SQL_HANDLE_ENV, pConnInfo->hdlEnv);

}

void executeQuery(SQLHDBC hdlDbc, SQLCHAR* pQuery)

{

SQLRETURN ret;

// Set up a statement handle

SQLHSTMT hdlStmt;

ret = SQLAllocHandle(SQL_HANDLE_STMT, hdlDbc, &hdlStmt);

assert(SQL_SUCCEEDED(ret));

// Execute a query to get the names and IDs of all tables in the schema

// search path (usually public).

ret = SQLExecDirect(hdlStmt, pQuery, SQL_NTS);

if (!SQL_SUCCEEDED(ret)) {

// Report the error and go no further if the statement failed.

cout << "Error executing statement." << endl;

reportError<SQLHSTMT>(SQL_HANDLE_STMT, hdlStmt);

exit(EXIT_FAILURE);

}

else {

// Query succeeded, so bind two variables to the two columns in the

// result set.

cout << "Fetching results..." << endl;

SQLBIGINT table_id; // Holds the ID of the table.

SQLTCHAR table_name[256]; // buffer to hold name of table

ret = SQLBindCol(hdlStmt, 1, SQL_C_SBIGINT, (SQLPOINTER)&table_id,

sizeof(table_id), NULL);

ret = SQLBindCol(hdlStmt, 2, SQL_C_TCHAR, (SQLPOINTER)table_name,

sizeof(table_name), NULL);

// Loop through the results,

while (SQL_SUCCEEDED(ret = SQLFetchScroll(hdlStmt, SQL_FETCH_NEXT, 1))) {

// Print the bound variables, which now contain the values from the

// fetched row.

cout << table_id << " | " << table_name << endl;

}

// See if loop exited for reasons other than running out of data

if (ret != SQL_NO_DATA) {

// Exited for a reason other than no more data... report the error.

reportError<SQLHSTMT>(SQL_HANDLE_STMT, hdlStmt);

}

}

SQLFreeHandle(SQL_HANDLE_STMT, hdlStmt);

}

int main()

{

DBConnection conn;

connect(&conn);

executeQuery(conn.hdlDbc,

(SQLCHAR*)"SELECT table_id, table_name FROM tables ORDER BY table_name");

executeQuery(conn.hdlDbc,

(SQLCHAR*)"SELECT table_id, table_name FROM tables ORDER BY table_id");

disconnect(&conn);

exit(EXIT_SUCCESS);

}

Running the example code in the vmart database produces output similar to this:

Connecting to database.

Connected to database.

Fetching results...

45035996273970908 | call_center_dimension

45035996273970836 | customer_dimension

45035996273972958 | customers

45035996273970848 | date_dimension

45035996273970856 | employee_dimension

45035996273970868 | inventory_fact

45035996273970904 | online_page_dimension

45035996273970912 | online_sales_fact

45035996273970840 | product_dimension

45035996273970844 | promotion_dimension

45035996273970860 | shipping_dimension

45035996273970876 | store_dimension

45035996273970894 | store_orders_fact

45035996273970880 | store_sales_fact

45035996273972806 | t

45035996273970852 | vendor_dimension

45035996273970864 | warehouse_dimension

Fetching results...

45035996273970836 | customer_dimension

45035996273970840 | product_dimension

45035996273970844 | promotion_dimension

45035996273970848 | date_dimension

45035996273970852 | vendor_dimension

45035996273970856 | employee_dimension

45035996273970860 | shipping_dimension

45035996273970864 | warehouse_dimension

45035996273970868 | inventory_fact

45035996273970876 | store_dimension

45035996273970880 | store_sales_fact

45035996273970894 | store_orders_fact

45035996273970904 | online_page_dimension

45035996273970908 | call_center_dimension

45035996273970912 | online_sales_fact

45035996273972806 | t

45035996273972958 | customers

Free handles.

1.13 - Loading data

A primary task for many client applications is loading data into the Vertica database. There are several different ways to insert data using ODBC, which are covered by the topics in this section.

1.13.1 - Using a single row insert

The easiest way to load data into Vertica is to run an INSERT SQL statement using the SQLExecDirect function. However, this method is limited to inserting a single row of data.

ret = SQLExecDirect(hstmt, (SQLTCHAR*)"INSERT into Customers values"

"(1,'abcda','efgh','1')", SQL_NTS);

1.13.2 - Using prepared statements

Vertica supports using server-side prepared statements with both ODBC and JDBC. Prepared statements let you define a statement once, and then run it many times with different parameters. The statement you want to execute contains placeholders instead of parameters. When you execute the statement, you supply values for each placeholder.

Placeholders are represented by question marks (?) as in the following example query:

SELECT * FROM public.inventory_fact WHERE product_key = ?

Server-side prepared statements are useful for:

-

Optimizing queries. Vertica only needs to parse the statement once.

-

Preventing SQL injection attacks. A SQL injection attack occurs when user input is either incorrectly filtered for string literal escape characters embedded in SQL statements or user input is not strongly typed and thereby unexpectedly run. Since a prepared statement is parsed separately from the input data, there is no chance the data can be accidentally executed by the database.

-

Binding variables directly to return columns. By pointing to data structures, the code doesn't have to perform extra transformations.
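The SQL injection point in the list above can be illustrated without a database connection. The following minimal sketch contrasts building a statement by splicing user input into the SQL text with keeping the statement text constant and using a placeholder. The function names are hypothetical and the code only manipulates strings; in a real application the placeholder value would be supplied separately through SQLBindParameter().

```c
#include <assert.h>
#include <stddef.h>
#include <stdio.h>
#include <string.h>

/* Unsafe: splices user input directly into the SQL text, so the input
 * can change the structure of the statement. */
int build_unsafe_query(char *out, size_t cap, const char *user_input)
{
    assert(out != NULL && user_input != NULL);
    return snprintf(out, cap,
        "SELECT * FROM public.inventory_fact WHERE product_key = %s",
        user_input);
}

/* Safer: the statement text is a constant with a placeholder, so user
 * input never becomes part of the SQL that is parsed. */
const char *prepared_query(void)
{
    return "SELECT * FROM public.inventory_fact WHERE product_key = ?";
}

/* Helper for the illustration: does the SQL text contain the fragment? */
int text_contains(const char *sql, const char *fragment)
{
    assert(sql != NULL && fragment != NULL);
    return strstr(sql, fragment) != NULL;
}
```

With the input `1 OR 1=1`, the text produced by `build_unsafe_query()` contains the fragment `OR 1=1`, which would match every row. The text returned by `prepared_query()` is the same no matter what the user enters.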

The following example demonstrates using a prepared statement for a single insert.

// Some standard headers

#include <stdio.h>

#include <stdlib.h>

// Only needed for Windows clients

// #include <windows.h>

// Standard ODBC headers

#include <sql.h>

#include <sqltypes.h>

#include <sqlext.h>

// Some constants for the size of the data to be inserted.

#define CUST_NAME_LEN 50

#define PHONE_NUM_LEN 15

#define NUM_ENTRIES 4

int main()

{

// Set up the ODBC environment

SQLRETURN ret;

SQLHENV hdlEnv;

ret = SQLAllocHandle(SQL_HANDLE_ENV, SQL_NULL_HANDLE, &hdlEnv);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not allocate a handle.\n");

exit(EXIT_FAILURE);

} else {

printf("Allocated an environment handle.\n");

}

// Tell ODBC that the application uses ODBC 3.

ret = SQLSetEnvAttr(hdlEnv, SQL_ATTR_ODBC_VERSION,

(SQLPOINTER) SQL_OV_ODBC3, SQL_IS_UINTEGER);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not set application version to ODBC3.\n");

exit(EXIT_FAILURE);

} else {

printf("Set application to ODBC 3.\n");

}

// Allocate a database handle.

SQLHDBC hdlDbc;

ret = SQLAllocHandle(SQL_HANDLE_DBC, hdlEnv, &hdlDbc);

// Connect to the database

printf("Connecting to database.\n");

const char *dsnName = "ExampleDB";

const char* userID = "dbadmin";

const char* passwd = "password123";

ret = SQLConnect(hdlDbc, (SQLCHAR*)dsnName,

SQL_NTS,(SQLCHAR*)userID,SQL_NTS,

(SQLCHAR*)passwd, SQL_NTS);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not connect to database.\n");

exit(EXIT_FAILURE);

} else {

printf("Connected to database.\n");

}

// Disable AUTOCOMMIT

printf("Disabling autocommit.\n");

ret = SQLSetConnectAttr(hdlDbc, SQL_ATTR_AUTOCOMMIT,

(SQLPOINTER)SQL_AUTOCOMMIT_OFF, SQL_IS_UINTEGER);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not disable autocommit.\n");

exit(EXIT_FAILURE);

}

// Set up a statement handle

SQLHSTMT hdlStmt;

SQLAllocHandle(SQL_HANDLE_STMT, hdlDbc, &hdlStmt);

SQLExecDirect(hdlStmt, (SQLCHAR*)"DROP TABLE IF EXISTS customers",

SQL_NTS);

SQLExecDirect(hdlStmt, (SQLCHAR*)"CREATE TABLE customers "

"(CustID int, CustName varchar(100), Phone_Number char(15));",

SQL_NTS);

// Set up a bunch of variables to be bound to the statement

// parameters.

// Create the prepared statement. This will insert data into the

// table we created above.

printf("Creating prepared statement\n");

ret = SQLPrepare (hdlStmt, (SQLTCHAR*)"INSERT INTO customers (CustID, "

"CustName, Phone_Number) VALUES(?,?,?)", SQL_NTS) ;

if(!SQL_SUCCEEDED(ret)) {

printf("Could not create prepared statement\n");

SQLFreeHandle(SQL_HANDLE_STMT, hdlStmt);

SQLFreeHandle(SQL_HANDLE_DBC, hdlDbc);

SQLFreeHandle(SQL_HANDLE_ENV, hdlEnv);

exit(EXIT_FAILURE);

} else {

printf("Created prepared statement.\n");

}

SQLINTEGER custID = 1234;

SQLCHAR custName[100] = "Fein, Fredrick";

SQLVARCHAR phoneNum[15] = "555-123-6789";

SQLLEN strFieldLen = SQL_NTS;

SQLLEN custIDLen = 0;

// Bind the data arrays to the parameters in the prepared SQL

// statement

ret = SQLBindParameter(hdlStmt, 1, SQL_PARAM_INPUT, SQL_C_LONG, SQL_INTEGER,

0, 0, &custID, 0 , &custIDLen);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not bind custID array\n");

SQLFreeHandle(SQL_HANDLE_STMT, hdlStmt);

SQLFreeHandle(SQL_HANDLE_DBC, hdlDbc);

SQLFreeHandle(SQL_HANDLE_ENV, hdlEnv);

exit(EXIT_FAILURE);

} else {

printf("Bound custID to prepared statement\n");

}

// Bind CustNames

ret = SQLBindParameter(hdlStmt, 2, SQL_PARAM_INPUT, SQL_C_CHAR, SQL_VARCHAR,

50, 0, (SQLPOINTER)custName, 0, &strFieldLen);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not bind custNames\n");

SQLFreeHandle(SQL_HANDLE_STMT, hdlStmt);

SQLFreeHandle(SQL_HANDLE_DBC, hdlDbc);

SQLFreeHandle(SQL_HANDLE_ENV, hdlEnv);

exit(EXIT_FAILURE);

} else {

printf("Bound custName to prepared statement\n");

}

// Bind phoneNums

ret = SQLBindParameter(hdlStmt, 3, SQL_PARAM_INPUT, SQL_C_CHAR, SQL_CHAR,

15, 0, (SQLPOINTER)phoneNum, 0, &strFieldLen);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not bind phoneNums\n");

SQLFreeHandle(SQL_HANDLE_STMT, hdlStmt);

SQLFreeHandle(SQL_HANDLE_DBC, hdlDbc);

SQLFreeHandle(SQL_HANDLE_ENV, hdlEnv);

exit(EXIT_FAILURE);

} else {

printf("Bound phoneNum to prepared statement\n");

}

// Execute the prepared statement.

printf("Running prepared statement...");

ret = SQLExecute(hdlStmt);

if(!SQL_SUCCEEDED(ret)) {

printf("not successful!\n");

} else {

printf("successful.\n");

}

// Done with the insert, commit the transaction

printf("Committing transaction\n");

ret = SQLEndTran(SQL_HANDLE_DBC, hdlDbc, SQL_COMMIT);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not commit transaction\n");

} else {

printf("Committed transaction\n");

}

// Clean up

printf("Free handles.\n");

ret = SQLDisconnect( hdlDbc );

if(!SQL_SUCCEEDED(ret)) {

printf("Error disconnecting. Transaction still open?\n");

exit(EXIT_FAILURE);

}

SQLFreeHandle(SQL_HANDLE_STMT, hdlStmt);

SQLFreeHandle(SQL_HANDLE_DBC, hdlDbc);

SQLFreeHandle(SQL_HANDLE_ENV, hdlEnv);

exit(EXIT_SUCCESS);

}

1.13.3 - Using batch inserts

You use batch inserts to insert chunks of data into the database. By breaking the data into batches, you can monitor the progress of the load by receiving information about any rejected rows after each batch is loaded. To perform a batch load through ODBC, you typically use a prepared statement with the parameters bound to arrays that contain the data to be loaded. For each batch, you load a new set of data into the arrays then execute the prepared statement.

When you perform a batch load, Vertica uses a COPY statement to load the data. Each additional batch you load uses the same COPY statement. The statement remains open until you end the transaction, close the cursor for the statement, or execute a non-INSERT statement.

Using a single COPY statement for multiple batches improves batch loading efficiency by:

Note

If the database connection has AUTOCOMMIT enabled, then the transaction is automatically committed after each batch insert statement, which closes the COPY statement. Leaving AUTOCOMMIT enabled makes your batch load much less efficient, and can cause added overhead in your database as all of the smaller loads are consolidated.

Even though Vertica uses a single COPY statement to insert multiple batches within a transaction, you can locate which (if any) rows were rejected due to invalid row formats or data type issues after each batch is loaded. See Tracking load status (ODBC) for details.

Note

While you can find rejected rows during the batch load transaction, other types of errors (such as running out of disk space or a node shutdown that makes the database unsafe) are only reported when the COPY statement ends.

Since the batch loads share a COPY statement, errors in one batch can cause earlier batches in the same transaction to be rolled back.
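The step described above, in which you load a new set of data into the arrays for each batch, can be sketched in plain C. This is a minimal illustration under stated assumptions, not code from the Vertica client: `source_row_t`, `fill_batch()`, `BATCH_ROWS`, and `NAME_LEN` are hypothetical names, and plain `int` and `char` arrays stand in for the SQLINTEGER and SQLCHAR arrays an ODBC application would bind.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

#define BATCH_ROWS 4  /* rows per batch (assumed for this sketch) */
#define NAME_LEN   50 /* fixed width of each name slot */

/* Hypothetical source record used only for this illustration. */
typedef struct {
    int id;
    const char *name;
} source_row_t;

/* Copies up to BATCH_ROWS rows, starting at index start, into the
 * column arrays. Returns the number of rows copied, which is the row
 * count the application would use for that batch. */
size_t fill_batch(const source_row_t *rows, size_t total, size_t start,
                  int ids[BATCH_ROWS], char names[BATCH_ROWS][NAME_LEN])
{
    assert(rows != NULL && ids != NULL && names != NULL);
    size_t n = 0;
    while (n < BATCH_ROWS && start + n < total) {
        ids[n] = rows[start + n].id;
        /* Truncate overlong names and always NUL-terminate the slot. */
        strncpy(names[n], rows[start + n].name, NAME_LEN - 1);
        names[n][NAME_LEN - 1] = '\0';
        n++;
    }
    return n;
}
```

An application would call `fill_batch()` repeatedly, advancing `start` by the returned count, and after each call set SQL_ATTR_PARAMSET_SIZE to that count before calling SQLExecute(). The final batch may contain fewer than `BATCH_ROWS` rows.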

Batch insert steps

The steps your application needs to take in order to perform an ODBC Batch Insert are:

-

Connect to the database.

-

Disable autocommit for the connection.

-

Create a prepared statement that inserts the data you want to load.

-

Bind the parameters of the prepared statement to arrays that will contain the data you want to load.

-

Populate the arrays with the data for your batches.

-

Execute the prepared statement.

-

Optionally, check the results of the batch load to find rejected rows.

-

Repeat the previous three steps until all of the data you want to load is loaded.

-

Commit the transaction.

-

Optionally, check the results of the entire batch transaction.

The following example code demonstrates a simplified version of the above steps.

// Some standard headers

#include <stdio.h>

#include <stdlib.h>

// Only needed for Windows clients

// #include <windows.h>

// Standard ODBC headers

#include <sql.h>

#include <sqltypes.h>

#include <sqlext.h>

int main()

{

// Number of data rows to insert

const int NUM_ENTRIES = 4;

// Set up the ODBC environment

SQLRETURN ret;

SQLHENV hdlEnv;

ret = SQLAllocHandle(SQL_HANDLE_ENV, SQL_NULL_HANDLE, &hdlEnv);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not allocate a handle.\n");

exit(EXIT_FAILURE);

} else {

printf("Allocated an environment handle.\n");

}

// Tell ODBC that the application uses ODBC 3.

ret = SQLSetEnvAttr(hdlEnv, SQL_ATTR_ODBC_VERSION,

(SQLPOINTER) SQL_OV_ODBC3, SQL_IS_UINTEGER);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not set application version to ODBC3.\n");

exit(EXIT_FAILURE);

} else {

printf("Set application to ODBC 3.\n");

}

// Allocate a database handle.

SQLHDBC hdlDbc;

ret = SQLAllocHandle(SQL_HANDLE_DBC, hdlEnv, &hdlDbc);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not allocate database handle.\n");

exit(EXIT_FAILURE);

} else {

printf("Allocated Database handle.\n");

}

// Connect to the database

printf("Connecting to database.\n");

const char *dsnName = "ExampleDB";

const char* userID = "dbadmin";

const char* passwd = "password123";

ret = SQLConnect(hdlDbc, (SQLCHAR*)dsnName,

SQL_NTS,(SQLCHAR*)userID,SQL_NTS,

(SQLCHAR*)passwd, SQL_NTS);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not connect to database.\n");

exit(EXIT_FAILURE);

} else {

printf("Connected to database.\n");

}

// Disable AUTOCOMMIT

printf("Disabling autocommit.\n");

ret = SQLSetConnectAttr(hdlDbc, SQL_ATTR_AUTOCOMMIT,

(SQLPOINTER)SQL_AUTOCOMMIT_OFF, SQL_IS_UINTEGER);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not disable autocommit.\n");

exit(EXIT_FAILURE);

}

// Set up a statement handle

SQLHSTMT hdlStmt;

SQLAllocHandle(SQL_HANDLE_STMT, hdlDbc, &hdlStmt);

// Create a table to hold the data

SQLExecDirect(hdlStmt, (SQLCHAR*)"DROP TABLE IF EXISTS customers",

SQL_NTS);

SQLExecDirect(hdlStmt, (SQLCHAR*)"CREATE TABLE customers "

"(CustID int, CustName varchar(100), Phone_Number char(15));",

SQL_NTS);

// Create the prepared statement. This will insert data into the

// table we created above.

printf("Creating prepared statement\n");

ret = SQLPrepare (hdlStmt, (SQLTCHAR*)"INSERT INTO customers (CustID, "

"CustName, Phone_Number) VALUES(?,?,?)", SQL_NTS) ;

if(!SQL_SUCCEEDED(ret)) {

printf("Could not create prepared statement\n");

exit(EXIT_FAILURE);

} else {

printf("Created prepared statement.\n");

}

// This is the data to be inserted into the database.

SQLCHAR custNames[][50] = { "Allen, Anna", "Brown, Bill", "Chu, Cindy",

"Dodd, Don" };

SQLINTEGER custIDs[] = { 100, 101, 102, 103};

SQLCHAR phoneNums[][15] = {"1-617-555-1234", "1-781-555-1212",

"1-508-555-4321", "1-617-555-4444"};

// Bind the data arrays to the parameters in the prepared SQL

// statement. First is the custID.

ret = SQLBindParameter(hdlStmt, 1, SQL_PARAM_INPUT, SQL_C_LONG, SQL_INTEGER,

0, 0, (SQLPOINTER)custIDs, sizeof(SQLINTEGER) , NULL);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not bind custID array\n");

exit(EXIT_FAILURE);

} else {

printf("Bound CustIDs array to prepared statement\n");

}

// Bind CustNames

ret = SQLBindParameter(hdlStmt, 2, SQL_PARAM_INPUT, SQL_C_CHAR, SQL_VARCHAR,

50, 0, (SQLPOINTER)custNames, 50, NULL);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not bind custNames\n");

exit(EXIT_FAILURE);

} else {

printf("Bound CustNames array to prepared statement\n");

}

// Bind phoneNums

ret = SQLBindParameter(hdlStmt, 3, SQL_PARAM_INPUT, SQL_C_CHAR, SQL_CHAR,

15, 0, (SQLPOINTER)phoneNums, 15, NULL);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not bind phoneNums\n");

exit(EXIT_FAILURE);

} else {

printf("Bound phoneNums array to prepared statement\n");

}

// Tell the ODBC driver how many rows we have in the

// array.

ret = SQLSetStmtAttr( hdlStmt, SQL_ATTR_PARAMSET_SIZE,

(SQLPOINTER)NUM_ENTRIES, 0 );

if(!SQL_SUCCEEDED(ret)) {

printf("Could not set the parameter set size\n");

exit(EXIT_FAILURE);

}

// Add multiple batches to the database. This just adds the same

// batch of data five times for simplicity's sake. Each call adds

// the 4 rows into the database.

for (int batchLoop=1; batchLoop<=5; batchLoop++) {

// Execute the prepared statement, loading all of the data

// in the arrays.

printf("Adding Batch #%d...", batchLoop);

ret = SQLExecute(hdlStmt);

if(!SQL_SUCCEEDED(ret)) {

printf("not successful!\n");

} else {

printf("successful.\n");

}

}

// Done with batches, commit the transaction

printf("Committing transaction\n");

ret = SQLEndTran(SQL_HANDLE_DBC, hdlDbc, SQL_COMMIT);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not commit transaction\n");

} else {

printf("Committed transaction\n");

}

// Clean up

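printf("Free handles.\n");

// Free the statement handle before disconnecting, because

// SQLDisconnect() releases any statements still open on the connection.

SQLFreeHandle(SQL_HANDLE_STMT, hdlStmt);

ret = SQLDisconnect( hdlDbc );

if(!SQL_SUCCEEDED(ret)) {

printf("Error disconnecting. Transaction still open?\n");

exit(EXIT_FAILURE);

}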

SQLFreeHandle(SQL_HANDLE_DBC, hdlDbc);

SQLFreeHandle(SQL_HANDLE_ENV, hdlEnv);

exit(EXIT_SUCCESS);

}

The result of running the above code is shown below.

Allocated an environment handle.

Set application to ODBC 3.

Allocated Database handle.

Connecting to database.

Connected to database.

Creating prepared statement

Created prepared statement.

Bound CustIDs array to prepared statement

Bound CustNames array to prepared statement

Bound phoneNums array to prepared statement

Adding Batch #1...successful.

Adding Batch #2...successful.

Adding Batch #3...successful.

Adding Batch #4...successful.

Adding Batch #5...successful.

Committing transaction

Committed transaction

Free handles.

The resulting table looks like this:

=> SELECT * FROM customers;

 CustID |  CustName   |  Phone_Number

--------+-------------+-----------------

    100 | Allen, Anna | 1-617-555-1234

    101 | Brown, Bill | 1-781-555-1212

    102 | Chu, Cindy  | 1-508-555-4321

    103 | Dodd, Don   | 1-617-555-4444

    100 | Allen, Anna | 1-617-555-1234

    101 | Brown, Bill | 1-781-555-1212

    102 | Chu, Cindy  | 1-508-555-4321

    103 | Dodd, Don   | 1-617-555-4444

    100 | Allen, Anna | 1-617-555-1234

    101 | Brown, Bill | 1-781-555-1212

    102 | Chu, Cindy  | 1-508-555-4321

    103 | Dodd, Don   | 1-617-555-4444

    100 | Allen, Anna | 1-617-555-1234

    101 | Brown, Bill | 1-781-555-1212

    102 | Chu, Cindy  | 1-508-555-4321

    103 | Dodd, Don   | 1-617-555-4444

    100 | Allen, Anna | 1-617-555-1234

    101 | Brown, Bill | 1-781-555-1212

    102 | Chu, Cindy  | 1-508-555-4321

    103 | Dodd, Don   | 1-617-555-4444

(20 rows)
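
The batch insert example binds each parameter to an array with one fixed-width slot per row. The buffer length passed to SQLBindParameter() (50 for custNames, 15 for phoneNums) is the distance between consecutive rows. The following is a minimal sketch of that addressing rule that does not call ODBC. The helpers paramRowAddress and rowMatches are hypothetical names used only for illustration; they are not driver functions.

```cpp
#include <cstddef>
#include <cstring>

// Hypothetical helper (not part of ODBC): returns the address of the
// value for a zero-based row in a column-wise parameter array.
// 'base' is the pointer that would be passed to SQLBindParameter() and
// 'bufferLength' is the per-row buffer size passed alongside it.
const char* paramRowAddress(const void* base,
                            std::size_t bufferLength,
                            std::size_t row)
{
    return static_cast<const char*>(base) + row * bufferLength;
}

// Same layout as the example: four names, 50 bytes reserved per row.
const char custNames[][50] = { "Allen, Anna", "Brown, Bill",
                               "Chu, Cindy", "Dodd, Don" };

// Returns true if the slot computed for 'row' holds the expected string.
bool rowMatches(std::size_t row, const char* expected)
{
    const char* slot = paramRowAddress(custNames, sizeof(custNames[0]), row);
    return std::strcmp(slot, expected) == 0;
}
```

For instance, rowMatches(2, "Chu, Cindy") returns true because row 2 starts 100 bytes past the start of the array. If the buffer length did not match the declared row width, the computed slots would drift away from the real row boundaries.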

Note

An input parameter bound with the SQL_C_NUMERIC data type uses the default numeric precision (37) and the default scale (0) instead of the precision and scale set by the SQL_NUMERIC_STRUCT input value. This behavior adheres to the ODBC standard. If you do not want to use the default precision and scale, use SQLSetDescField() or SQLSetDescRec() to change them in the statement's attributes.
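
To illustrate why the scale matters, the sketch below decodes a numeric value stored as a scaled integer, first with its own scale and then with the default scale of 0. NumericSketch is a stand-in that mirrors the field layout of SQL_NUMERIC_STRUCT so that the code compiles without ODBC headers. The helpers interpretNumeric and makeSample are hypothetical. The sketch assumes that a driver applying the default scale reads the same bytes as an unscaled integer.

```cpp
#include <cmath>
#include <cstdint>

// Stand-in for SQL_NUMERIC_STRUCT (assumption: same field layout).
struct NumericSketch {
    unsigned char precision;
    signed char   scale;
    unsigned char sign;      // 1 = positive, 0 = negative
    unsigned char val[16];   // scaled integer, least significant byte first
};

// Interprets the scaled integer in 'n' using the given scale.
// Only the low 8 bytes of val are read, which is enough for this sketch.
double interpretNumeric(const NumericSketch& n, int scale)
{
    std::uint64_t scaled = 0;
    for (int i = 7; i >= 0; --i) {
        scaled = (scaled << 8) | n.val[i];
    }
    double value = static_cast<double>(scaled) / std::pow(10.0, scale);
    return n.sign == 1 ? value : -value;
}

// Builds a numeric holding the scaled integer 12345 with scale 2 (123.45).
NumericSketch makeSample()
{
    NumericSketch n = {};
    n.precision = 5;
    n.scale = 2;
    n.sign = 1;
    n.val[0] = 0x39;  // 12345 == 0x3039, low byte first
    n.val[1] = 0x30;
    return n;
}
```

With the struct's own scale of 2, the sample decodes to 123.45. With the default scale of 0, the same bytes decode to 12345.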

1.13.3.1 - Tracking load status (ODBC)

After loading a batch of data, your client application can get the number of rows that were processed and find out whether each row was accepted or rejected.

Finding the number of processed rows

To get the number of rows processed by a batch, set the SQL_ATTR_PARAMS_PROCESSED_PTR statement attribute to point to a variable that receives the number of rows:

SQLULEN rowsProcessed;

SQLSetStmtAttr(hdlStmt, SQL_ATTR_PARAMS_PROCESSED_PTR, &rowsProcessed, 0);

When your application calls SQLExecute() to insert the batch, the Vertica ODBC driver stores the number of rows that it processed in the variable you specified in the SQL_ATTR_PARAMS_PROCESSED_PTR statement attribute. This count is not necessarily the number of rows that were successfully inserted.

Finding the accepted and rejected rows

Your application can also set a statement attribute named SQL_ATTR_PARAM_STATUS_PTR that points to an array where the ODBC driver can store the result of inserting each row:

SQLUSMALLINT rowResults[ NUM_ENTRIES ];

SQLSetStmtAttr(hdlStmt, SQL_ATTR_PARAM_STATUS_PTR, rowResults, 0);

This array must be at least as large as the number of rows being inserted in each batch.

When your application calls SQLExecute to insert a batch, the ODBC driver populates the array with values indicating whether each row was successfully inserted (SQL_PARAM_SUCCESS or SQL_PARAM_SUCCESS_WITH_INFO) or encountered an error (SQL_PARAM_ERROR).
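
The processed-row count and the status array can be combined into a per-batch summary. The following is a minimal sketch that compiles without ODBC headers. The constants are stand-ins for the sqlext.h macros, with values assumed to match their usual definitions. BatchSummary and summarizeBatch are hypothetical names, not part of the ODBC API.

```cpp
#include <cstddef>

// Stand-ins for the ODBC row status codes (assumed values). In a real
// client, use the macros from sqlext.h instead.
const unsigned short kParamSuccess         = 0;  // SQL_PARAM_SUCCESS
const unsigned short kParamSuccessWithInfo = 6;  // SQL_PARAM_SUCCESS_WITH_INFO
const unsigned short kParamError           = 5;  // SQL_PARAM_ERROR

struct BatchSummary {
    std::size_t accepted;
    std::size_t rejected;
};

// Hypothetical helper: tallies the first 'rowsProcessed' entries of the
// status array that the driver filled in during SQLExecute(). Entries
// with any other status are counted as neither accepted nor rejected.
BatchSummary summarizeBatch(const unsigned short* rowResults,
                            std::size_t rowsProcessed)
{
    BatchSummary summary = {0, 0};
    for (std::size_t i = 0; i < rowsProcessed; ++i) {
        if (rowResults[i] == kParamSuccess ||
            rowResults[i] == kParamSuccessWithInfo) {
            ++summary.accepted;
        } else if (rowResults[i] == kParamError) {
            ++summary.rejected;
        }
    }
    return summary;
}
```

In a client, you would call summarizeBatch(rowResults, rowsProcessed) after each SQLExecute() call and compare the accepted count with the batch size before committing.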

The following example expands on the example in Using batch inserts. It also reports the number of rows processed and the status of each inserted row.

// Some standard headers

#include <stdio.h>

#include <stdlib.h>

// Only needed for Windows clients

// #include <windows.h>

// Standard ODBC headers

#include <sql.h>

#include <sqltypes.h>

#include <sqlext.h>

// Helper function to print SQL error messages.

template <typename HandleT>

void reportError(int handleTypeEnum, HandleT hdl)

{

// Get the status records.

SQLSMALLINT i, MsgLen;

SQLRETURN ret2;

SQLCHAR SqlState[6], Msg[SQL_MAX_MESSAGE_LENGTH];

SQLINTEGER NativeError;

i = 1;

printf("\n");

// Stop on SQL_NO_DATA or on any error so that the loop always ends.

while (SQL_SUCCEEDED(ret2 = SQLGetDiagRec(handleTypeEnum, hdl, i, SqlState,

&NativeError, Msg, sizeof(Msg), &MsgLen))) {

printf("error record %d\n", i);

printf("sqlstate: %s\n", SqlState);

printf("detailed msg: %s\n", Msg);

printf("native error code: %d\n\n", (int)NativeError);

i++;

}

}

int main()

{

// Number of data rows to insert

const int NUM_ENTRIES = 4;

SQLRETURN ret;

SQLHENV hdlEnv;

ret = SQLAllocHandle(SQL_HANDLE_ENV, SQL_NULL_HANDLE, &hdlEnv);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not allocate a handle.\n");

exit(EXIT_FAILURE);

} else {

printf("Allocated an environment handle.\n");

}

ret = SQLSetEnvAttr(hdlEnv, SQL_ATTR_ODBC_VERSION,

(SQLPOINTER) SQL_OV_ODBC3, SQL_IS_UINTEGER);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not set application version to ODBC3.\n");

exit(EXIT_FAILURE);

} else {

printf("Set application to ODBC 3.\n");

}

SQLHDBC hdlDbc;

ret = SQLAllocHandle(SQL_HANDLE_DBC, hdlEnv, &hdlDbc);

if(!SQL_SUCCEEDED(ret)) {

printf("Could not allocate database handle.\n");

exit(EXIT_FAILURE);

} else {

printf("Allocated Database handle.\n");

}

// Connect to the database

printf("Connecting to database.\n");

const char *dsnName = "ExampleDB";