Containerized Vertica

Vertica Eon Mode leverages container technology to meet the needs of modern application development and operations workflows that must deliver software quickly and efficiently across a variety of infrastructures. Containerized Vertica supports Kubernetes with automation tools to help maintain the state of your environment with minimal disruptions and manual intervention.

Containerized Vertica provides the following benefits:

- Performance: Eon Mode separates compute from storage, which provides the optimal architecture for stateful, containerized applications. Eon Mode subclusters can target specific workloads and scale elastically according to the current computational needs.

- High availability: Vertica containers provide a consistent, repeatable environment that you can deploy quickly. If a database host or service fails, you can easily replace the resource.

- Resource utilization: A container is a runtime environment that packages an application and its dependencies in an isolated process. This isolation allows containerized applications to share hardware without interference, providing granular resource control and cost savings.

- Flexibility: Kubernetes is the de facto container orchestration platform. It is supported by a large ecosystem of public and private cloud providers.

Containerized Vertica ecosystem

Vertica provides various tools and artifacts for production and development environments. The containerized Vertica ecosystem includes the following:

- Vertica Helm chart: Helm is a Kubernetes package manager that bundles into a single package the YAML manifests that deploy Kubernetes objects. Download Vertica Helm charts from the Vertica Helm Charts Repository.

- Custom Resource Definition (CRD): A CRD is a shared global object that extends the Kubernetes API with your custom resource types. You can use a CRD to instantiate a custom resource (CR), a deployable object with a desired state. Vertica provides CRDs that deploy and support the Eon Mode architecture on Kubernetes.

- VerticaDB Operator: The operator is a custom controller that monitors the state of your CR and automates administrator tasks. If the current state differs from the declared state, the operator works to correct the current state.

- Admission controller: The admission controller uses a webhook that the operator queries to verify changes to mutable states in a CR.

- VerticaDB vlogger: The vlogger is a lightweight image used to deploy a sidecar utility container. The sidecar sends logs from vertica.log in the Vertica server container to standard output on the host node to simplify log aggregation.

- Vertica Community Edition (CE) image: The CE image is the containerized version of the limited Enterprise Mode Vertica community edition (CE) license. The CE image provides a test environment consisting of an example database and developer tools.

  In addition to the pre-built CE image, you can build a custom CE image with the tools provided in the Vertica one-node-ce GitHub repository.

- Communal Storage Options: Vertica supports a variety of public and private cloud storage providers. For a list of supported storage providers, see Containerized environments.

- Kafka integration: Containerized Kafka Scheduler provides a CRD and Helm chart to create and launch the Vertica Kafka scheduler, a standalone Java application that automatically consumes data from one or more Kafka topics and then loads the structured data into Vertica.

Repositories

Vertica maintains the following open source projects on GitHub:

- vertica-kubernetes: Vertica on Kubernetes is an open source project that welcomes contributions from the Vertica community. The vertica-kubernetes GitHub repository contains all the source code for the Vertica on Kubernetes integration, and includes contributing guidelines and instructions on how to set up development workflows.

- vcluster: vcluster is a Go library that uses a high-level REST interface to perform database operations with the Node Management Agent (NMA) and HTTPS service. The vclusterops library replaces Administration tools (admintools), a traditional command-line interface that executes administrator commands through STDIN and requires SSH keys for internal node communications. The vclusterops deployment is more efficient in containerized environments than the admintools deployment.

  vcluster is an open source project, so you can build custom implementations with the library. For details about migrating your existing admintools deployment to vcluster, see Upgrading Vertica on Kubernetes.

- vertica-containers: GitHub repository that contains source code for the following container-based projects:

1 - Containerized Vertica on Kubernetes

Kubernetes is an open-source container orchestration platform that automatically manages infrastructure resources and schedules tasks for containerized applications at scale. Kubernetes achieves automation with a declarative model that decouples the application from the infrastructure. The administrator provides Kubernetes the desired state of an application, and Kubernetes deploys the application and works to maintain that desired state. This frees the administrator to update the application as business needs evolve, without worrying about the implementation details.

An application consists of resources, which are stateful objects that you create from Kubernetes resource types. Kubernetes provides access to resource types through the Kubernetes API, an HTTP API that exposes resource types as endpoints. The most common way to create a resource is with a YAML-formatted manifest file that defines the desired state of the resource. You use the kubectl command-line tool to request a resource instance of that type from the Kubernetes API. In addition to the default resource types, you can extend the Kubernetes API and define your own resource types as a Custom Resource Definition (CRD).
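To make the manifest workflow concrete, the following is a minimal sketch of a manifest for one of the default Kubernetes resource types, a Deployment. The names `example-app` and `web` and the image `nginx:1.25` are hypothetical values chosen for illustration and are not part of the Vertica documentation.

```yaml
# Desired state for a built-in resource type (Deployment).
# All names and the image below are illustrative assumptions.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: example-app
spec:
  replicas: 3                 # desired state: three identical pods
  selector:
    matchLabels:
      app: example-app
  template:
    metadata:
      labels:
        app: example-app      # must match the selector above
    spec:
      containers:
        - name: web
          image: nginx:1.25
```

Assuming the manifest is saved as `deployment.yaml` (a hypothetical filename), `kubectl apply -f deployment.yaml` requests the resource from the Kubernetes API, and Kubernetes then works to keep three replicas running.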

To manage the infrastructure, Kubernetes uses a host to run the control plane, and designates one or more hosts as worker nodes. The control plane is a collection of services and controllers that maintain the desired state of Kubernetes objects and schedule tasks on worker nodes. Worker nodes complete tasks that the control plane assigns. Just as you can create a CRD to extend the Kubernetes API, you can create a custom controller that maintains the state of your custom resources (CR) created from the CRD.

Vertica custom resource definition and custom controller

The VerticaDB CRD extends the Kubernetes API so that you can create custom resources that deploy an Eon Mode database as a StatefulSet. In addition, Vertica provides the VerticaDB operator, a custom controller that maintains the desired state of your CR and automates lifecycle tasks. The result is a self-healing, highly-available, and scalable Eon Mode database that requires minimal manual intervention.

To simplify deployment, Vertica packages the CRD and the operator in Helm charts. A Helm chart bundles manifest files into a single package to create multiple resource type objects with a single command.
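As a rough illustration of what a custom resource created from the VerticaDB CRD might look like, consider the sketch below. This section does not define the full schema, so the `apiVersion`, the `communal` and `subclusters` field names, and every value are assumptions to confirm against the VerticaDB custom resource definition reference. Only `spec.image` and the `opentext/vertica-k8s` image name appear in this document.

```yaml
# Hedged sketch of a VerticaDB custom resource; field names other than
# spec.image are assumptions, and all values are placeholders.
apiVersion: vertica.com/v1
kind: VerticaDB
metadata:
  name: example-vdb                         # hypothetical CR name
spec:
  image: opentext/vertica-k8s:version       # "version" is a placeholder tag
  communal:
    path: s3://example-bucket/example-db    # hypothetical communal storage path
    endpoint: https://s3.example.com        # hypothetical storage endpoint
    credentialSecret: example-s3-creds      # hypothetical Secret name
  subclusters:
    - name: primary-subcluster              # hypothetical subcluster name
      size: 3                               # desired number of pods
```

The operator compares a declaration like this with the objects running in the cluster and works to bring them in line with it.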

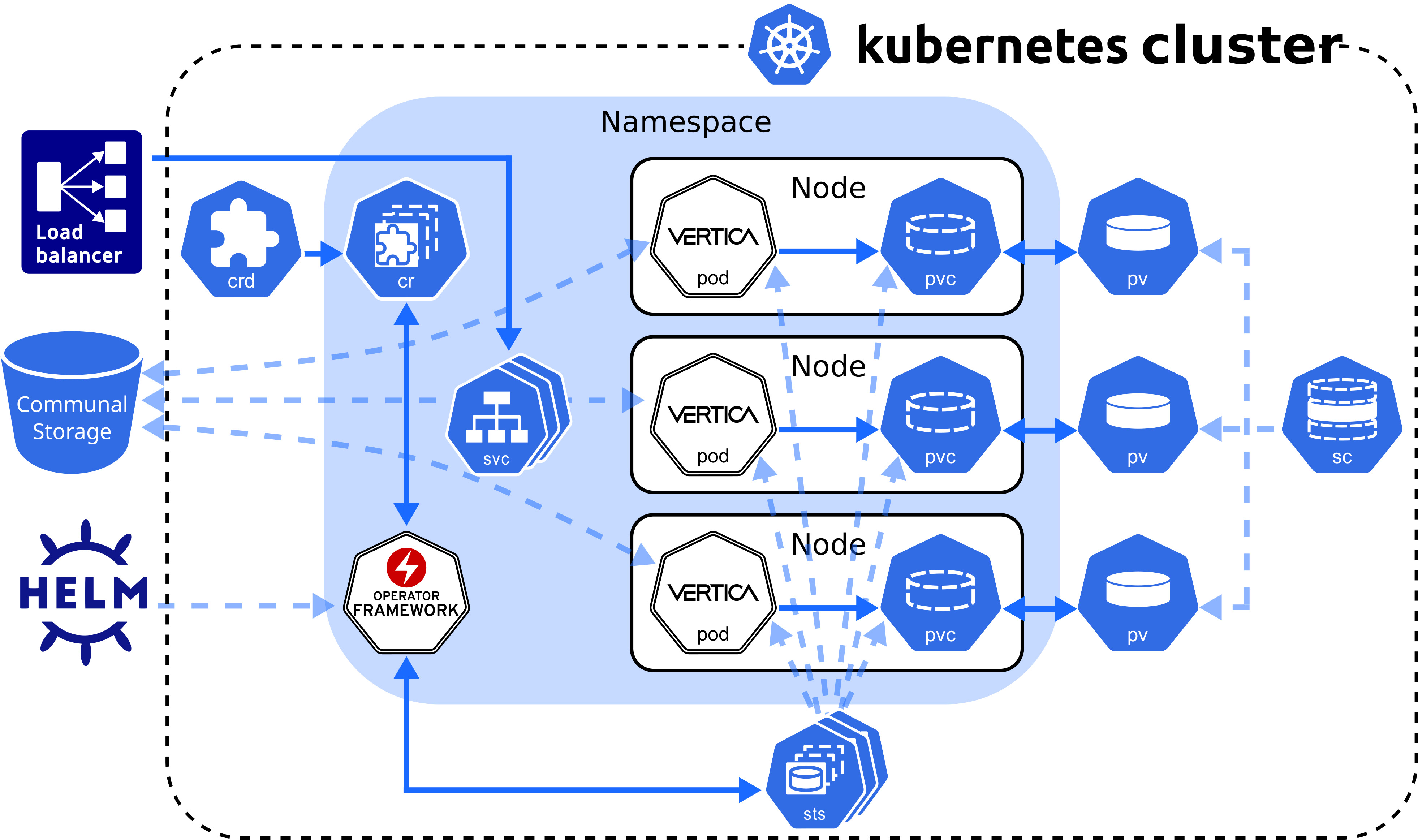

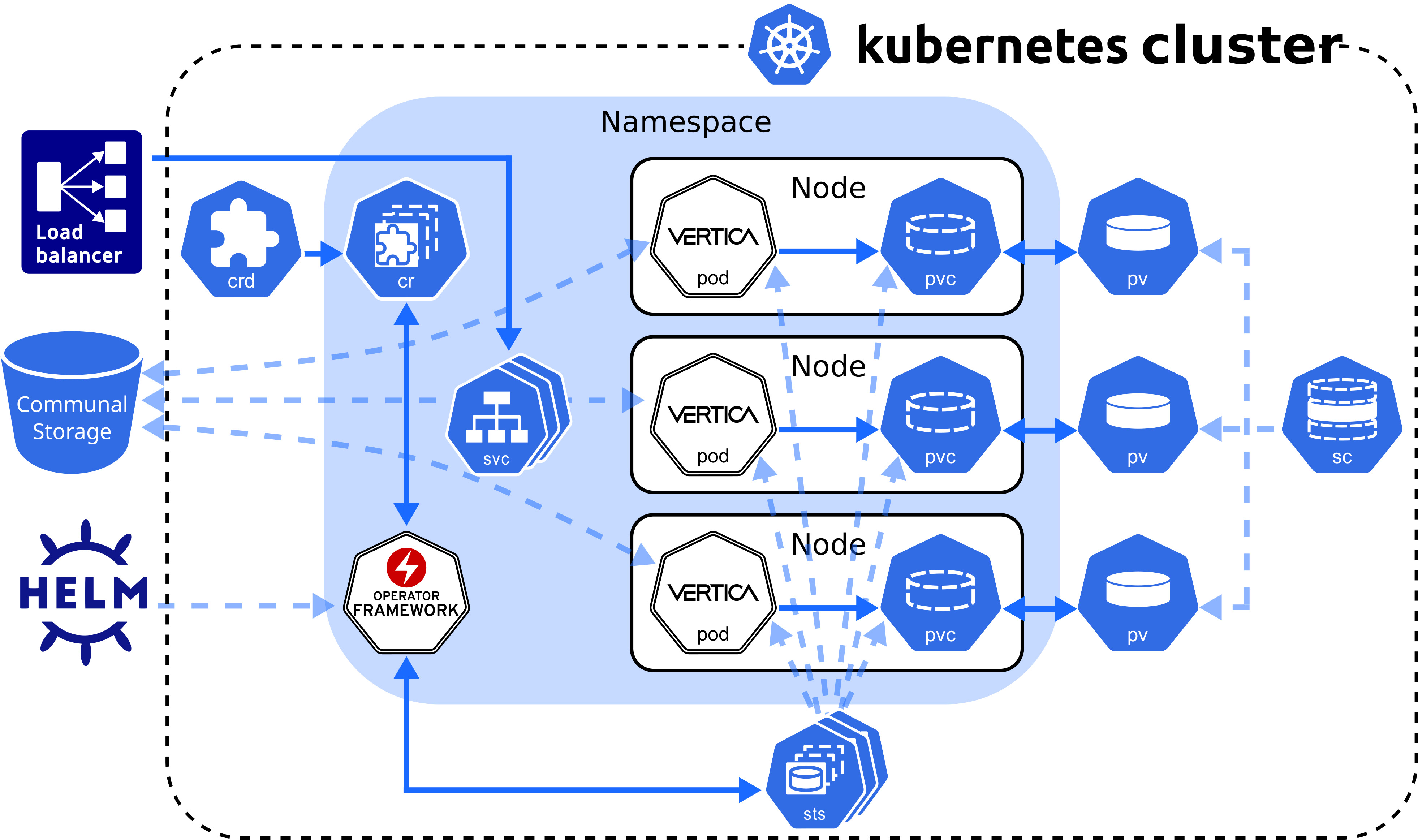

Custom resource definition architecture

The Vertica CRD creates a StatefulSet, a workload resource type that persists data with ephemeral Kubernetes objects. The following diagram describes the Vertica CRD architecture:

VerticaDB operator

The VerticaDB operator is a cluster-scoped custom controller that maintains the state of custom objects and automates administrator tasks across all namespaces in the cluster. The operator watches objects and compares their current state to the desired state declared in the custom resource. When the current state does not match the desired state, the operator works to restore the objects to the desired state.

In addition to state maintenance, the operator:

- Installs Vertica

- Creates an Eon Mode database

- Upgrades Vertica

- Revives an existing Eon Mode database

- Restarts and reschedules DOWN pods

- Scales subclusters

- Manages services for pods

- Monitors pod health

- Handles load balancing for internal and external traffic

To validate changes to the custom resource, the operator queries the admission controller, a webhook that provides rules for mutable states in a custom resource.

Vertica makes the operator and admission controller available with Helm charts, the kubectl command-line tool, or OperatorHub.io. For details about installing the operator and the admission controller with each method, see Installing the VerticaDB operator.

Vertica pod

A pod is essentially a wrapper around one or more logically grouped containers. A Vertica pod in the default configuration consists of two containers: the Vertica server container that runs the main Vertica process, and the Node Management Agent (NMA) container.

The NMA runs in a sidecar container, which is a container that contributes to the pod's main process, the Vertica server. The Vertica pod runs a single process per container to align each process lifetime with its container lifetime. This alignment provides the following benefits:

- Accurate health checks. A container can have only one health check, so performing a health check on a container with multiple running processes might return inaccurate results.

- Granular Kubernetes probe control. Kubernetes sets probes at the container level. If the Vertica container runs multiple processes, the NMA process might interfere with the probe that you set for the Vertica server process. This interference is not an issue with single-process containers.

- Simplified monitoring. A container with multiple processes has multiple states, which complicates monitoring. A container with a single process returns a single state.

- Easier troubleshooting. If a container runs multiple processes and crashes, it might be difficult to determine which failure caused the crash. Running one process per container makes it easier to pinpoint issues.

When a Vertica pod launches, the NMA process starts and prepares the configuration files for the Vertica server process. After the server container retrieves environment information from the NMA configuration files, the Vertica server process is ready.

All containers in the Vertica pod consume the host node resources in a shared execution environment. In addition to sharing resources, a pod extends the container to interact with Kubernetes services. For example, you can assign labels to associate pods to other objects, and you can implement affinity rules to schedule pods on specific host nodes.

DNS names provide continuity between pod lifecycles. Each pod is assigned an ordered and stable DNS name that is unique within its cluster. When a Vertica pod fails, the rescheduled pod uses the same DNS name as its predecessor. If a pod needs to persist data between lifecycles, you can mount a custom volume in its filesystem.

Rescheduled pods require information about the environment to become part of the cluster. This information is provided by the Downward API. Environment information, such as the superuser password Secret, is mounted in the /etc/podinfo directory.

NMA sidecar

The NMA sidecar exposes a REST API that the VerticaDB operator uses to administer your cluster. This container runs the same image as the Vertica server process, specified by the spec.image parameter setting in the VerticaDB custom resource definition.

The NMA sidecar is designed to consume minimal resources, but because the database size determines the amount of resources consumed by some NMA operations, there are no default resource limits. This prevents failures that result from inadequate available resources.

Running the NMA in a sidecar enables idiomatic Kubernetes logging, which sends all logs to STDOUT and STDERR on the host node. In addition, the kubectl logs command accepts a container name, so you can specify a container name during log collection.

Sidecar logger

The Vertica server process writes log messages to a catalog file named vertica.log. However, idiomatic Kubernetes practices send log messages to STDOUT and STDERR on the host node for log aggregation.

To align Vertica server logging with Kubernetes convention, Vertica provides the vertica-logger sidecar image. You can run this image in a sidecar, and it retrieves logs from vertica.log and sends them to the container's STDOUT and STDERR stream. If your sidecar logger needs to persist data, you can mount a custom volume in the filesystem.

For implementation details, see VerticaDB custom resource definition.
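A sidecar logger declaration might look like the following sketch. It assumes that each element of the `sidecars` array accepts a container name and image. The container name `vlogger` and the image reference `vertica-logger:tag` are placeholders, and the registry path and tag are not given in this section.

```yaml
# Sketch only: adds a logging sidecar to each Vertica pod.
spec:
  sidecars:
    - name: vlogger                # hypothetical container name
      image: vertica-logger:tag    # placeholder; supply the real registry path and tag
```

With a sidecar like this in place, `kubectl logs` with the `-c` option and the sidecar's container name would return the forwarded vertica.log output.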

Persistent storage

A pod is an ephemeral, immutable object that requires access to external storage to persist data between lifecycles. To persist data, the operator uses the following API resource types:

- StorageClass: Represents an external storage provider. You must create a StorageClass object separately from your custom resource and set this value with the local.storageClassName configuration parameter.

- PersistentVolume (PV): A unit of storage that mounts in a pod to persist data. You dynamically or statically provision PVs. Each PV references a StorageClass.

- PersistentVolumeClaim (PVC): The resource type that a pod uses to describe its StorageClass and storage requirements. When you delete a VerticaDB CR, its PVC is deleted.

A pod mounts a PV in its filesystem to persist data, but a PV is not associated with a pod by default. However, the pod is associated with a PVC that includes a StorageClass in its storage requirements. When a pod requests storage with a PVC, the operator observes this request and then searches for a PV that meets the storage requirements. If the operator locates a PV, it binds the PVC to the PV and mounts the PV as a volume in the pod. If the operator does not locate a PV, either the operator must dynamically provision one, or the administrator must manually provision one before the operator can bind it to a pod.

PVs persist data because they exist independently of the pod life cycle. When a pod fails or is rescheduled, it has no effect on the PV. When you delete a VerticaDB, the VerticaDB operator automatically deletes any PVCs associated with that VerticaDB instance.

For additional details about StorageClass, PersistentVolume, and PersistentVolumeClaim, see the Kubernetes documentation.

StorageClass requirements

The StorageClass affects how the Vertica server environment and operator function. For optimum performance, consider the following:

- If you do not set the local.storageClassName configuration parameter, the operator uses the default storage class. If you use the default storage class, confirm that it meets storage requirements for a production workload.

- Select a StorageClass that uses a recommended storage format type as its fsType.

- Use dynamic volume provisioning. The operator requires on-demand volume provisioning to create PVs as needed.
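The requirements above can be sketched as a StorageClass manifest. This example assumes the AWS EBS CSI driver as the provisioner and ext4 as the file system. Both are illustrative choices rather than recommendations from this section, and the name `vertica-local-sc` is hypothetical.

```yaml
# Sketch of a StorageClass that supports dynamic provisioning.
# Provisioner, file system type, and name are assumptions for illustration.
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: vertica-local-sc                 # value to pass to local.storageClassName
provisioner: ebs.csi.aws.com             # a CSI driver that provisions volumes on demand
parameters:
  csi.storage.k8s.io/fstype: ext4        # file system type; confirm it is a recommended format
volumeBindingMode: WaitForFirstConsumer  # provision the volume when a pod is scheduled
reclaimPolicy: Delete
```

Other provisioners use different parameter keys for the file system type, so check your storage provider's documentation before you adapt this sketch.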

Local volume mounts

The operator mounts a single PVC in the /home/dbadmin/local-data/ directory of each pod to persist data. Each of the following subdirectories is a sub-path into the volume that backs the PVC:

- /catalog: Optional subdirectory that you can create if your environment requires a catalog location that is separate from the local data. You can customize this path with the local.catalogPath parameter.

  By default, the catalog is stored in the /data subdirectory.

- /data: Stores any temporary files, and the catalog if local.catalogPath is not set. You can customize this path with the local.dataPath parameter.

- /depot: Improves depot warming in a rescheduled pod. You can customize this path with the local.depotPath parameter.

  Note

  You can change the volume type for /depot with the local.depotVolume parameter. By default, this parameter is set to PersistentVolume, and the operator creates the /depot sub-path. If local.depotVolume is not set to PersistentVolume, the operator does not create the sub-path.

- /opt/vertica/config: Persists the contents of the configuration directory between restarts.

- /opt/vertica/log: Persists log files between pod restarts.

- /tmp/scrutinize: Target location for the final scrutinize tar file and any additional files generated during scrutinize diagnostics collection.

Note

Kubernetes assigns each custom resource a unique identifier. The volume mount paths include the unique identifier between the mount point and the subdirectory. For example, the full path to the /data directory is /home/dbadmin/local-data/uid/data.

By default, each path mounted in the /local-data directory is owned by the user or group specified by the operator. To customize the user or group, set the podSecurityContext custom resource definition parameter.
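As a sketch of that customization, the fragment below sets a pod-level security context. It assumes that `podSecurityContext` accepts the standard Kubernetes PodSecurityContext fields. The numeric IDs are hypothetical, and whether a given Vertica image supports arbitrary IDs is not covered in this section.

```yaml
# Sketch only: customize ownership of the paths mounted under /local-data.
spec:
  podSecurityContext:
    runAsUser: 3500     # hypothetical UID for the container processes
    fsGroup: 3500       # hypothetical GID applied to mounted volumes
```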

Custom volume mounts

You might need to persist data between pod lifecycles in one of the following scenarios:

You can mount a custom volume in the Vertica pod or sidecar filesystem. To mount a custom volume in the Vertica pod, add the definition in the spec section of the CR. To mount the custom volume in the sidecar, add it in an element of the sidecars array.

The CR requires that you provide the volume type and a name for each custom volume. The CR accepts any Kubernetes volume type. The volumeMounts.name value identifies the volume within the CR, and has the following requirements and restrictions:

- It must match the volumes.name parameter setting.

- It must be unique among all volumes in the /local-data, /podinfo, or /licensing mounted directories.

For instructions on how to mount a custom volume in either the Vertica server container or in a sidecar, see VerticaDB custom resource definition.
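The two mount locations described above can be sketched in a single CR fragment. All volume names, paths, the claim name, and the sidecar image are hypothetical. The volume sources shown (`persistentVolumeClaim` and `emptyDir`) are standard Kubernetes volume types chosen only as examples.

```yaml
# Sketch only: one custom volume for the Vertica server container
# and one for a sidecar. Names, paths, and images are placeholders.
spec:
  volumeMounts:                         # mounts in the Vertica server container
    - name: server-vol                  # must match a volumes.name entry
      mountPath: /path/to/server-vol
  sidecars:
    - name: example-sidecar
      image: example-sidecar-image:tag
      volumeMounts:                     # mounts in the sidecar container
        - name: sidecar-vol
          mountPath: /path/to/sidecar-vol
  volumes:                              # each volume needs a name and a type
    - name: server-vol
      persistentVolumeClaim:
        claimName: example-claim        # hypothetical existing PVC
    - name: sidecar-vol
      emptyDir: {}
```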

Service objects

Vertica on Kubernetes provides two service objects: a headless service that requires no configuration to maintain DNS records and ordered names for each pod, and a load balancing service that manages internal traffic and external client requests for the pods in your cluster.

Load balancing services

Each subcluster uses a single load balancing service object. You can manually assign a name to a load balancing service object with the subclusters[i].serviceName parameter in the custom resource. Assigning a name is useful when you want to:

- Direct traffic from a single client to multiple subclusters.

- Scale subclusters by workload with more flexibility.

- Identify subclusters by a custom service object name.

To configure the type of service object, use the subclusters[i].serviceType parameter in the custom resource to define a Kubernetes service type. Vertica supports the following service types:

- ClusterIP: The default service type. This service provides internal load balancing, and sets a stable IP and port that is accessible from within the subcluster only.

- NodePort: Provides external client access. You can specify a port number for each host node in the subcluster to open for client connections.

- LoadBalancer: Uses a cloud provider load balancer to create NodePort and ClusterIP services as needed. For details about implementation, see the Kubernetes documentation and your cloud provider documentation.
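The service parameters above might be combined as in the following sketch. It reads "direct traffic from a single client to multiple subclusters" as two subclusters sharing one `serviceName`, which is an interpretation rather than something this section states outright. The subcluster names and the service name `connections` are hypothetical, and the `name` field is assumed.

```yaml
# Sketch only: two subclusters that share one load balancing service object.
spec:
  subclusters:
    - name: primary-subcluster       # hypothetical subcluster name
      serviceType: LoadBalancer      # ClusterIP is the default when unset
      serviceName: connections       # custom service object name
    - name: secondary-subcluster
      serviceType: LoadBalancer      # assumed to match the subcluster above
      serviceName: connections       # same name, so clients use a single service
```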

Important

To prevent performance issues during heavy network traffic, Vertica recommends that you set --proxy-mode to iptables for your Kubernetes cluster.

Because native Vertica load balancing interferes with the Kubernetes service object, Vertica recommends that you allow the Kubernetes services to manage load balancing for the subcluster. You can configure the native Vertica load balancer within the Kubernetes cluster, but you might receive unexpected results. For example, if you set the Vertica load balancing policy to ROUNDROBIN, the load balancing appears random.

For additional details about Kubernetes services, see the official Kubernetes documentation.

Security considerations

Vertica on Kubernetes supports both TLS and mTLS for communications between resource objects. You must manually configure TLS in your environment. For details, see TLS protocol.

The VerticaDB operator manages changes to the certificates. If you update an existing certificate, the operator replaces the certificate in the Vertica server container. If you add or delete a certificate, the operator reschedules the pod with the new configuration.

The subsequent sections detail internal and external connections that require TLS for secure communications.

Admission controller webhook certificates

The VerticaDB operator Helm chart includes the admission controller, a webhook that communicates with the Kubernetes API server to validate changes to a resource object. Because the API server communicates over HTTPS only, you must configure TLS certificates to authenticate communications between the API server and the webhook.

The method you use to install the VerticaDB operator determines how you manage TLS certificates for the admission controller:

- Helm charts: In the default configuration, the operator generates self-signed certificates. You can add custom certificates with the webhook.certSource Helm chart parameter.

- kubectl: The operator generates self-signed certificates.

- OperatorHub.io: Runs on the Operator Lifecycle Manager (OLM) and automatically creates and mounts a self-signed certificate for the webhook. This installation method does not require additional action.

For details about each installation method, see Installing the VerticaDB operator.
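For the Helm chart method, a custom certificate configuration could be expressed in a values file along the lines of this sketch. Only `webhook.certSource` is named in this section. The value `secret`, the companion `webhook.tlsSecret` parameter, and the Secret name are assumptions to verify against the Helm chart parameters reference.

```yaml
# values.yaml (hypothetical filename) for the VerticaDB operator Helm chart.
webhook:
  certSource: secret               # assumed value that selects a user-provided certificate
  tlsSecret: custom-webhook-tls    # assumed parameter; hypothetical Secret holding the certificate
```

A values file like this can be passed to `helm install` with the `-f` option.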

Node Management Agent certificates

The Node Management Agent (NMA) exposes a REST API for cluster administration. By default, the NMA generates self-signed certificates that are safe for production environments, but you might require custom certificates. You can add these custom certificates with the nmaTLSSecret custom resource parameter.

Communal storage certificates

Supported storage locations authenticate requests with a self-signed certificate authority (CA) bundle. For TLS configuration details for each provider, see Configuring communal storage.

Client-server certificates

You might require multiple certificates to authenticate external client connections to the load balancing service object. You can mount one or more custom certificates in the Vertica server container with the certSecrets custom resource parameter. Each certificate is mounted in the container at /certs/cert-name/key.

For details, see VerticaDB custom resource definition.
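The NMA and client-server certificate parameters described above might appear together in a CR as sketched below. The Secret names are hypothetical, and the shape of `certSecrets` (a list of objects with a `name` key) is an assumption to confirm in the VerticaDB custom resource definition reference.

```yaml
# Sketch only: custom certificates supplied as Kubernetes Secrets.
spec:
  nmaTLSSecret: nma-tls-secret     # hypothetical Secret with the custom NMA certificates
  certSecrets:                     # one entry per certificate to mount
    - name: client-tls-a           # mounted under /certs/client-tls-a/
    - name: client-tls-b           # mounted under /certs/client-tls-b/
```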

Prometheus metrics certificates

Vertica integrates with Prometheus to scrape metrics about the VerticaDB operator and the server process. The operator and server export metrics independently from one another, and each set of metrics requires a different TLS configuration.

The operator SDK framework enforces role-based access control (RBAC) to the metrics with a proxy sidecar that uses self-signed certificates to authenticate requests for authorized service accounts. If you run Prometheus outside of Kubernetes, you cannot authenticate with a service account, so you must provide the proxy sidecar with custom TLS certificates.

The Vertica server exports metrics with the HTTPS service. This service requires client, server, and CA certificates to configure mutual mode TLS for a secure connection.

For details about both the operator and server metrics, see Prometheus integration.

System configuration

As a best practice, make system configurations on the host node so that pods inherit those settings from the host node. This strategy eliminates the need to provide each pod a privileged security context to make system configurations on the host.

To manually configure host nodes, refer to the following sections:

The superuser account—historically, the dbadmin account—must use one of the authentication techniques described in Dbadmin authentication access.

2 - Vertica images

The following table describes Vertica server and automation tool images:

Creating a custom Vertica image

The Creating a Vertica Image tutorial in the Vertica Integrator's Guide provides a line-by-line description of the Dockerfile hosted on GitHub. You can add dependencies to replicate your development and production environments.

Python container UDx

The Vertica images with Python UDx development capabilities include the vertica_sdk package and the Python Standard Library.

If your UDx depends on a Python package that is not included in the image, you must make the package available to the Vertica process during runtime. You can either mount a volume that contains the package dependencies, or you can create a custom Vertica server image.

Important

When you load the UDx library with CREATE LIBRARY, the DEPENDS clause must specify the location of the Python package in the Vertica server container filesystem.

Use the Python Package Index to download Python package source distributions.

Mounting Python libraries as volumes

You can mount a Python package dependency as a volume in the Vertica server container filesystem. A Python UDx can access the contents of the volume at runtime.

- Download the package source distribution to the host machine.

- On the host machine, extract the tar file contents into a mountable volume:

      $ tar -xvf lib-name.version.tar.gz -C /path/to/py-dependency-vol

- Mount the volume that contains the extracted source distribution in the custom resource (CR). The following snippet mounts the py-dependency-vol volume in the Vertica server container:

      spec:
        ...
        volumeMounts:
          - name: nfs
            mountPath: /path/to/py-dependency-vol
        volumes:
          - name: nfs
            nfs:
              path: /nfs
              server: nfs.example.com
        ...

For details about mounting custom volumes in a CR, see VerticaDB custom resource definition.

Adding a Python library to a custom Vertica image

Create a custom image that includes any Python package dependencies in the Vertica server base image.

For a comprehensive guide about creating a custom Vertica image, see the Creating a Vertica Image tutorial in the Vertica Integrator's Guide.

- Download the package source distribution on the machine that builds the container.

- Create a Dockerfile that includes the Python source distribution. The ADD command automatically extracts the contents of the tar file into the target-dir directory:

      FROM opentext/vertica-k8s:version
      ADD lib-name.version.tar.gz /path/to/target-dir
      ...

  For a complete list of available Vertica server images, see opentext/vertica-k8s Docker Hub repository.

- Build the Dockerfile:

      $ docker build . -t image-name:tag

- Push the image to a container registry so that you can add the image to a Vertica custom resource:

      $ docker image push registry-host:port/registry-username/image-name:tag

3 - VerticaDB operator

The Vertica operator automates error-prone and time-consuming tasks that a Vertica on Kubernetes administrator must otherwise perform manually. The operator:

- Installs Vertica

- Creates an Eon Mode database

- Upgrades Vertica

- Revives an existing Eon Mode database

- Restarts and reschedules DOWN pods

- Scales subclusters

- Manages services for pods

- Monitors pod health

- Handles load balancing for internal and external traffic

The Vertica operator is a Go binary that uses the Operator SDK framework. It runs in its own pod, and is cluster-scoped to manage any resource objects in any namespace across the cluster.

For details about installing and upgrading the operator, see Installing the VerticaDB operator.

Monitoring desired state

Because the operator is cluster-scoped, each cluster is allowed one operator pod that acts as a custom controller and monitors the state of the custom resource objects within all namespaces across the cluster. The operator uses the control loop mechanism to reconcile state changes by investigating state change notifications from the custom resource instance, and periodically comparing the current state with the desired state.

If the operator detects a change in the desired state, it determines what change occurred and reconciles the current state with the new desired state. For example, if the user deletes a subcluster from the custom resource instance and successfully saves the changes, the operator deletes the corresponding subcluster objects in Kubernetes.

Validating state changes

All VerticaDB operator installation options include an admission controller, which uses a webhook to prevent invalid state changes to the custom resource. When you save a change to a custom resource, the admission controller webhook queries a REST endpoint that provides rules for mutable states in a custom resource. If a change violates the state rules, the admission controller prevents the change and returns an error. For example, it returns an error if you try to save a change that violates K-Safety.

Limitations

The operator has the following limitations:

The VerticaDB operator 2.0.0 does not use Administration tools (admintools) with API version v1. The following features require admintools commands, so they are not available with that operator version and API version configuration:

To use these features with operator 2.0.0, you must use a lower server version.

3.1 - Installing the VerticaDB operator

The custom resource definition (CRD), VerticaDB operator, and admission controller work together to maintain the state of your environment and automate tasks.

The VerticaDB operator is a custom controller that monitors CR instances to maintain the desired state of VerticaDB objects. The operator includes an admission controller, which is a webhook that queries a REST endpoint to verify changes to mutable states in a CR instance.

By default, the operator is cluster-scoped—you can deploy one operator per cluster to monitor objects across all namespaces in the cluster. For flexibility, Vertica also provides a Helm chart deployment option that installs the operator at the namespace level.

Installation options

Vertica provides the following options to install the VerticaDB operator and admission controller:

- Helm charts. Helm is a package manager for Kubernetes. The Helm chart option is the most common installation method and lets you customize your TLS configuration and environment setup. For example, Helm chart installations include operator logging levels and log rotation policy. For details about additional options, see Helm chart parameters.

  Vertica also provides the Quickstart Helm chart option so that you can get started quickly with minimal requirements.

- kubectl installation. Apply the Custom Resource Definitions (CRDs) and VerticaDB operator directly. You can use the kubectl tool to apply the latest CRD available on vertica-kubernetes GitHub repository.

- OperatorHub.io. This is a registry that lets vendors share Kubernetes operators.

Note

The installation options are mutually exclusive. Each option has its own workflow that is incompatible with the other options. For example, you cannot install the VerticaDB operator with the Helm charts, and then deploy an operator in the same environment using OperatorHub.io.

Helm charts

Important

You must have cluster administrator privileges to install the operator Helm chart.

Vertica packages the VerticaDB operator and admission controller in a Helm chart. The following sections detail different installation methods so that you can install the operator to meet your environment requirements. You can customize your operator during and after installation with Helm chart parameters.

For additional details about Helm, see the Helm documentation.

Prerequisites

Quickstart installation

The quickstart installation installs the VerticaDB Helm chart with minimal commands. This deployment installs the operator in the default configuration, which includes the following:

- Cluster-scoped webhook and controllers that monitor resources across all namespaces in the cluster. For namespace-scoped deployments, see Namespace-scoped installation.

- Self-signed certificates to communicate with the Kubernetes API server. If your environment requires custom certificates, see Custom certificate installation.

To quickly install the Helm chart, you must add the latest chart to your local repository and then install it in a namespace:

The add command downloads the chart to your local repository, and the update command gets the latest charts from the remote repository. When you add the Helm chart to your local chart repository, provide a descriptive name for future reference.

The following add command names the charts vertica-charts:

$ helm repo add vertica-charts https://vertica.github.io/charts

"vertica-charts" has been added to your repositories

$ helm repo update

Hang tight while we grab the latest from your chart repositories...

...Successfully got an update from the "vertica-charts" chart repository

Update Complete. ⎈Happy Helming!⎈

- Install the Helm chart to deploy the VerticaDB operator in your cluster. The following command names this chart instance vdb-op, and creates a default namespace for the operator if it does not already exist:

$ helm install vdb-op --namespace verticadb-operator --create-namespace vertica-charts/verticadb-operator

For helm install options, see the Helm documentation.
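The quickstart commands above can be collected into one function. This is a minimal sketch rather than an official script: the function name install_vdb_operator and its optional arguments are illustrative, and the defaults mirror the release name and namespace used in this section.

```shell
# Sketch: run the quickstart steps in order and stop at the first failure.
# install_vdb_operator is a hypothetical helper name, not part of the chart.
install_vdb_operator() {
  local release="${1:-vdb-op}"
  local namespace="${2:-verticadb-operator}"

  # Register the chart repository, refresh it, then install the operator.
  helm repo add vertica-charts https://vertica.github.io/charts &&
    helm repo update &&
    helm install "$release" \
      --namespace "$namespace" --create-namespace \
      vertica-charts/verticadb-operator
}

# Usage (requires helm and cluster administrator privileges):
#   install_vdb_operator
#   install_vdb_operator my-release my-namespace
```

Chaining the commands with && means that a failed repository update does not lead to an install from a stale chart index.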

Namespace-scoped installation

By default, the VerticaDB operator is cluster-scoped. However, Vertica provides an option to install a namespace-scoped operator for environments that require more granular control over which resources an operator watches for state changes.

The VerticaDB operator includes a webhook and controllers. The webhook is cluster-scoped and verifies state changes for resources across all namespaces in the cluster. The controllers—the control loops that reconcile the current and desired states for resources—do not have a cluster-scope requirement, so you can install them at the namespace level. The namespace-scoped operator installs the webhook once at the cluster level, and then installs the controllers in the specified namespace. You can install these namespaced controllers in multiple namespaces per cluster.

Important

Do not install a mix of cluster-scoped and namespace-scoped operators in the same cluster. For example, do not install an operator with cluster-scoped controllers, and then install a namespace-scoped operator in the same cluster. Otherwise, multiple operators serve the same CR, which results in unpredictable behavior.

To install a namespace-scoped operator, add the latest chart to your repository and issue separate commands to deploy the webhook and controllers:

-

The add command downloads the chart to your local repository, and the update command gets the latest charts from the remote repository. When you add the Helm chart to your local chart repository, provide a descriptive name for future reference.

The following add command names the charts vertica-charts:

$ helm repo add vertica-charts https://vertica.github.io/charts

"vertica-charts" has been added to your repositories

$ helm repo update

Hang tight while we grab the latest from your chart repositories...

...Successfully got an update from the "vertica-charts" chart repository

Update Complete. ⎈Happy Helming!⎈

-

Deploy the cluster-scoped webhook and install the required CRDs. To deploy the operator as a webhook without controllers, set controllers.enable to false. The following command deploys the webhook to the vertica namespace, which is the namespace for a Vertica cluster:

$ helm install webhook vertica-charts/verticadb-operator --namespace vertica --set controllers.enable=false

-

Deploy the namespace-scoped operator. To prevent a second webhook installation, set webhook.enable to false. To deploy only the controllers, set controllers.scope to namespace. The following command installs the operator in the default namespace:

$ helm install vdb-op vertica-charts/verticadb-operator --namespace default --set webhook.enable=false,controllers.scope=namespace

For details about the controllers.* parameter settings, see Helm chart parameters. For helm install options, see the Helm documentation.
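Because the namespaced controllers can be installed in more than one namespace, the two helm install commands above lend themselves to a loop. The following sketch assumes that every namespace already exists (neither command uses --create-namespace) and that reusing the vdb-op release name in each namespace suits your environment. The function name install_namespaced_operator is hypothetical.

```shell
# Sketch: install the webhook once, then the controllers in each namespace.
# Arguments: <webhook-namespace> <controller-namespace>...
install_namespaced_operator() {
  local webhook_ns="$1"
  local ns
  shift

  # Cluster-scoped webhook and CRDs, with the controllers disabled.
  helm install webhook vertica-charts/verticadb-operator \
    --namespace "$webhook_ns" \
    --set controllers.enable=false || return 1

  # Namespace-scoped controllers, with the webhook disabled.
  for ns in "$@"; do
    helm install vdb-op vertica-charts/verticadb-operator \
      --namespace "$ns" \
      --set webhook.enable=false,controllers.scope=namespace || return 1
  done
}

# Usage (team-a and team-b are placeholder namespaces):
#   install_namespaced_operator vertica team-a team-b
```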

Custom certificate installation

The admission controller uses a webhook that communicates with the Kubernetes API over HTTPS. By default, the Helm chart generates a self-signed certificate before installing the admission controller. A self-signed certificate might not be suitable for your environment—you might require custom certificates that are signed by a trusted third-party certificate authority (CA).

To add custom certificates for the webhook:

-

Set the TLS certificate's Subject Alternative Name (SAN) to the admission controller's fully-qualified domain name (FQDN). Set the SAN in a configuration file using the following format:

[alt_names]

DNS.1 = verticadb-operator-webhook-service.operator-namespace.svc

DNS.2 = verticadb-operator-webhook-service.operator-namespace.svc.cluster.local

-

Create a Secret that contains the certificates. A Secret conceals your certificates when you pass them as command-line parameters.

The following command creates a Secret named tls-secret. It stores the TLS key, TLS certificate, and CA certificate:

$ kubectl create secret generic tls-secret --from-file=tls.key=/path/to/tls.key --from-file=tls.crt=/path/to/tls.crt --from-file=ca.crt=/path/to/ca.crt

-

Install the Helm chart.

The add command downloads the chart to your local repository, and the update command gets the latest charts from the remote repository. When you add the Helm chart to your local chart repository, provide a descriptive name for future reference.

The following add command names the charts vertica-charts:

$ helm repo add vertica-charts https://vertica.github.io/charts

"vertica-charts" has been added to your repositories

$ helm repo update

Hang tight while we grab the latest from your chart repositories...

...Successfully got an update from the "vertica-charts" chart repository

Update Complete. ⎈Happy Helming!⎈

When you install the Helm chart with custom certificates for the admission controller, you have to use the webhook.certSource and webhook.tlsSecret Helm chart parameters:

webhook.certSource indicates whether you want the admission controller to install user-provided certificates. To install with custom certificates, set this parameter to secret.

webhook.tlsSecret accepts a Secret that contains your certificates.

The following command deploys the operator with the TLS certificates and creates the namespace if it does not already exist:

$ helm install operator-name --namespace namespace --create-namespace vertica-charts/verticadb-operator \

--set webhook.certSource=secret \

--set webhook.tlsSecret=tls-secret
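The SAN entries from the first step depend only on the namespace where you install the operator, so they can be generated instead of typed by hand. The sketch below assumes the default service name prefix verticadb-operator (that is, nameOverride is not set) and the default cluster.local cluster domain. The function name webhook_alt_names and the file name san.cnf are hypothetical.

```shell
# Sketch: print the [alt_names] section for the admission controller webhook.
# Argument: the namespace where the operator is installed.
webhook_alt_names() {
  local namespace="$1"
  # Assumes the default object-name prefix (nameOverride is not set).
  local service="verticadb-operator-webhook-service"

  printf '[alt_names]\n'
  printf 'DNS.1 = %s.%s.svc\n' "$service" "$namespace"
  printf 'DNS.2 = %s.%s.svc.cluster.local\n' "$service" "$namespace"
}

# Usage: append the section to an existing OpenSSL configuration file.
#   webhook_alt_names verticadb-operator >> san.cnf
```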

Granting user privileges

After the operator is deployed, the cluster administrator is the only user with privileges to create and modify VerticaDB CRs within the cluster. To grant other users the privileges required to work with custom resources, you can leverage namespaces and Kubernetes RBAC.

To grant these privileges, the cluster administrator creates a namespace for the user, then grants that user the edit ClusterRole within that namespace. Next, the cluster administrator creates a Role with specific CR privileges, and binds that role to the user with a RoleBinding. The cluster administrator can repeat this process for each user that must create or modify VerticaDB CRs within the cluster.

To provide a user with privileges to create or modify a VerticaDB CR:

-

Create a namespace for the application developer:

$ kubectl create namespace user-namespace

namespace/user-namespace created

-

Grant the application developer edit role privileges in the namespace:

$ kubectl create --namespace user-namespace rolebinding edit-access --clusterrole=edit --user=username

rolebinding.rbac.authorization.k8s.io/edit-access created

-

Create the Role with privileges to create and modify any CRs in the namespace. Vertica provides the verticadb-operator-cr-user-role.yaml file that defines these rules:

$ kubectl --namespace user-namespace apply -f https://github.com/vertica/vertica-kubernetes/releases/latest/download/verticadb-operator-cr-user-role.yaml

role.rbac.authorization.k8s.io/vertica-cr-user-role created

Verify the changes with kubectl get:

$ kubectl get roles --namespace user-namespace

NAME CREATED AT

vertica-cr-user-role 2023-11-30T19:37:24Z

-

Create a RoleBinding that associates this Role to the user. The following command creates a RoleBinding named vdb-access:

$ kubectl create --namespace user-namespace rolebinding vdb-access --role=vertica-cr-user-role --user=username

rolebinding.rbac.authorization.k8s.io/vdb-access created

Verify the changes with kubectl get:

$ kubectl get rolebinding --namespace user-namespace

NAME ROLE AGE

edit-access ClusterRole/edit 16m

vdb-access Role/vertica-cr-user-role 103s

Now, the user associated with username has access to create and modify VerticaDB CRs in the isolated user-namespace.
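Because the cluster administrator repeats these four steps for each user, they can be wrapped in a function. This is a sketch under the same assumptions as the steps above: the namespace does not exist yet, and the RoleBinding names edit-access and vdb-access are acceptable in every namespace. The function name grant_vdb_access is hypothetical.

```shell
# Sketch: give one user an isolated namespace with VerticaDB CR privileges.
# Arguments: <username> <namespace>
grant_vdb_access() {
  local user="$1"
  local namespace="$2"
  local role_url="https://github.com/vertica/vertica-kubernetes/releases/latest/download/verticadb-operator-cr-user-role.yaml"

  # 1. Namespace, 2. edit ClusterRole binding, 3. CR Role, 4. CR Role binding.
  kubectl create namespace "$namespace" &&
    kubectl create --namespace "$namespace" rolebinding edit-access \
      --clusterrole=edit --user="$user" &&
    kubectl --namespace "$namespace" apply -f "$role_url" &&
    kubectl create --namespace "$namespace" rolebinding vdb-access \
      --role=vertica-cr-user-role --user="$user"
}

# Usage:
#   grant_vdb_access username user-namespace
```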

kubectl installation

You can install the VerticaDB operator from GitHub by applying the YAML manifests with the kubectl command-line tool:

- Install all custom resource definitions (CRDs). Because the CRDs are too large for client-side operations, you must use the --server-side=true and --force-conflicts options to apply the manifests:

$ kubectl apply --server-side=true --force-conflicts -f https://github.com/vertica/vertica-kubernetes/releases/latest/download/crds.yaml

For additional details about these commands, see Server-Side Apply documentation.

- Install the VerticaDB operator:

$ kubectl apply -f https://github.com/vertica/vertica-kubernetes/releases/latest/download/operator.yaml

OperatorHub.io

OperatorHub.io is a registry that allows vendors to share Kubernetes operators. Each vendor must adhere to packaging guidelines to simplify user adoption.

To install the VerticaDB operator from OperatorHub.io, navigate to the Vertica operator page and follow the install instructions.

3.2 - Upgrading the VerticaDB operator

Vertica supports two separate options to upgrade the VerticaDB operator:

-

OperatorHub.io

-

Helm Charts

Note

You must upgrade the operator with the same option that you selected for installation. For example, you cannot install the VerticaDB operator with Helm charts, and then upgrade the operator in the same environment using OperatorHub.io.

Prerequisites

OperatorHub.io

Important

VerticaDB operator versions 1.x are namespace-scoped, and versions 2.x are cluster-scoped. To upgrade from version 1.x to 2.x, you must uninstall the operator in each namespace before you upgrade.

For detailed instructions about uninstalling your operator, see the OLM documentation.

The Operator Lifecycle Manager (OLM) operator manages upgrades for OperatorHub.io installations. You can configure the OLM operator to upgrade the VerticaDB operator manually or automatically with the Subscription object's spec.installPlanApproval parameter.

Automatic upgrade

To configure automatic version upgrades, set spec.installPlanApproval to Automatic, or omit the setting entirely. When the OLM operator refreshes the catalog source, it installs the new VerticaDB operator automatically.

Manual upgrade

Upgrade the VerticaDB operator manually to approve version upgrades for specific install plans. To manually upgrade, set the spec.installPlanApproval parameter to Manual and complete the following:

-

Verify if there is an install plan that requires approval to proceed with the upgrade:

$ kubectl get installplan

NAME CSV APPROVAL APPROVED

install-ftcj9 verticadb-operator.v1.7.0 Manual false

install-pw7ph verticadb-operator.v1.6.0 Manual true

The command output shows that the install plan install-ftcj9 for VerticaDB operator version 1.7.0 is not approved.

-

Approve the install plan with a patch command:

$ kubectl patch installplan install-ftcj9 --type=merge --patch='{"spec": {"approved": true}}'

installplan.operators.coreos.com/install-ftcj9 patched

After you set the approval, the OLM operator silently upgrades the VerticaDB operator.

-

Optional. To monitor the upgrade's progress, inspect the Status section in the description of the Subscription object:

$ kubectl describe subscription subscription-object-name
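When several install plans exist, a small filter can list the plans that still need approval. The sketch below assumes the default column layout shown in the kubectl get installplan output above, where APPROVED is the fourth column. The function name pending_installplans is hypothetical.

```shell
# Sketch: read "kubectl get installplan" output on stdin and print the names
# of install plans that are not yet approved.
pending_installplans() {
  # Skip the header row; print NAME where the APPROVED column is "false".
  awk 'NR > 1 && $4 == "false" { print $1 }'
}

# Usage:
#   kubectl get installplan | pending_installplans
```

You can pass each name that the filter prints to the kubectl patch command from the approval step.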

Helm charts

You must have cluster administrator privileges to upgrade the VerticaDB operator with Helm charts.

The Helm chart includes the CRD, but the helm install command does not overwrite an existing CRD. To upgrade the operator, you must update the CRD with the manifest from the GitHub repository.

Additionally, you must upgrade all custom resource definitions, even if you do not deploy them in your environment. These CRDs are installed with the operator and maintained as separate YAML manifests. Upgrading all CRDs ensures that your operator is upgraded completely.

Important

VerticaDB operator versions 1.x are namespace-scoped, and versions 2.x are cluster-scoped. To upgrade from version 1.x to 2.x, you must uninstall the operator in each namespace before you upgrade.

You can uninstall the VerticaDB operator with the helm uninstall command:

$ helm uninstall vdb-op --namespace namespace

You can upgrade the CRDs and VerticaDB operator from GitHub by applying the YAML manifests with the kubectl command-line tool:

-

Install all custom resource definitions (CRDs). Because the CRDs are too large for client-side operations, you must use the --server-side=true and --force-conflicts options to apply the manifests:

$ kubectl apply --server-side=true --force-conflicts -f https://github.com/vertica/vertica-kubernetes/releases/latest/download/crds.yaml

For additional details about these commands, see Server-Side Apply documentation.

-

Upgrade the Helm chart:

$ helm upgrade operator-name --wait vertica-charts/verticadb-operator
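The two upgrade steps must run in order, CRDs first, so it can help to script them together. The following sketch makes two assumptions that are not part of the steps above: it refreshes the local chart repository with helm repo update before the upgrade, and it passes --namespace explicitly for releases that are not in the current namespace. The function name upgrade_vdb_operator is hypothetical.

```shell
# Sketch: upgrade the CRDs, then the operator Helm release.
# Arguments: <release-name> <namespace>
upgrade_vdb_operator() {
  local release="$1"
  local namespace="$2"
  local crds_url="https://github.com/vertica/vertica-kubernetes/releases/latest/download/crds.yaml"

  # Helm does not overwrite existing CRDs, so apply them from GitHub first.
  kubectl apply --server-side=true --force-conflicts -f "$crds_url" &&
    helm repo update &&
    helm upgrade "$release" --namespace "$namespace" --wait \
      vertica-charts/verticadb-operator
}

# Usage:
#   upgrade_vdb_operator vdb-op verticadb-operator
```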

3.3 - Helm chart parameters

The following list describes the available settings for the VerticaDB operator and admission controller Helm chart:

affinity- Applies rules that constrain the VerticaDB operator to specific nodes. It is more expressive than nodeSelector. If this parameter is not set, then the operator uses no affinity setting.

controllers.enable- Determines whether controllers are enabled when running the operator. Controllers watch and act on custom resources within the cluster.

For namespace-scoped operators, set this to false. This deploys the cluster-scoped operator only as a webhook, and then you can set webhook.enable to false and deploy the controllers to an individual namespace. For details, see Installing the VerticaDB operator.

Default: true

controllers.scope- Scope of the controllers in the VerticaDB operator. Controllers watch and act on custom resources within the cluster. This parameter accepts the following values:

cluster: The controllers watch for changes to all resources across all namespaces in the cluster.

namespace: The controllers watch for changes to resources only in the namespace specified during deployment. You must deploy the operator as a webhook for the cluster, then deploy the operator controllers in a namespace. You can deploy multiple namespace-scoped operators within the same cluster.

For details, see Installing the VerticaDB operator.

Default: cluster

image.name- Name of the image that runs the operator.

Default: vertica/verticadb-operator:version

imagePullSecrets- List of Secrets that store credentials to authenticate to the private container repository specified by image.repo and rbac_proxy_image. For details, see Specifying ImagePullSecrets in the Kubernetes documentation.

image.repo- Server that hosts the repository that contains image.name. Use this parameter for deployments that require control over a private hosting server, such as an air-gapped operator.

Use this parameter with rbac_proxy_image.name and rbac_proxy_image.repo.

Default: docker.io

logging.filePath-

Deprecated

This parameter is deprecated and will be removed in a future release.

Path to a log file in the VerticaDB operator filesystem. If this value is not specified, Vertica writes logs to standard output.

Default: Empty string ('') that indicates standard output.

logging.level- Minimum logging level. This parameter accepts the following values:

Default: info

logging.maxFileSize-

Deprecated

This parameter is deprecated and will be removed in a future release.

When logging.filePath is set, the maximum size in MB of the logging file before log rotation occurs.

Default: 500

logging.maxFileAge-

Deprecated

This parameter is deprecated and will be removed in a future release.

When logging.filePath is set, the maximum age in days of the logging file before log rotation deletes the file.

Default: 7

logging.maxFileRotation-

Deprecated

This parameter is deprecated and will be removed in a future release.

When logging.filePath is set, the maximum number of files that are kept in rotation before the old ones are removed.

Default: 3

nameOverride- Sets the prefix for the name assigned to all objects that the Helm chart creates.

If this parameter is not set, each object name begins with the name of the Helm chart, verticadb-operator.

nodeSelector- Controls which nodes are used to schedule the operator pod. If this is not set, the node selector is omitted from the operator pod when it is created. To set this parameter, provide a list of key/value pairs.

The following example schedules the operator only on nodes that have the region=us-east label:

nodeSelector:
  region: us-east

priorityClassName- PriorityClass name assigned to the operator pod. This affects where the pod is scheduled.

prometheus.createProxyRBAC- When set to true, creates role-based access control (RBAC) rules that authorize access to the operator's /metrics endpoint for the Prometheus integration.

Default: true

prometheus.createServiceMonitor-

Deprecated

This parameter is deprecated and will be removed in a future release.

When set to true, creates the ServiceMonitor custom resource for the Prometheus operator. You must install the Prometheus operator before you set this to true and install the Helm chart.

For details, see the Prometheus operator GitHub repository.

Default: false

prometheus.expose- Configures the operator's /metrics endpoint for the Prometheus integration. The following options are valid:

-

EnableWithAuthProxy: Creates a new service object that exposes an HTTPS /metrics endpoint. The RBAC proxy controls access to the metrics.

-

EnableWithoutAuth: Creates a new service object that exposes an HTTP /metrics endpoint that does not authorize connections. Any client with network access can read the metrics.

-

Disable: Prometheus metrics are not exposed.

Default: Disable

prometheus.tlsSecret- Secret that contains the TLS certificates for the Prometheus /metrics endpoint. You must create this Secret in the same namespace where you deployed the Helm chart.

The Secret requires the following values:

To ensure that the operator uses the certificates in this parameter, you must set prometheus.expose to EnableWithAuthProxy.

If prometheus.expose is not set to EnableWithAuthProxy, then this parameter is ignored, and the RBAC proxy sidecar generates its own self-signed certificate.

rbac_proxy_image.name- Name of the Kubernetes RBAC proxy image that performs authorization. Use this parameter for deployments that require authorization by a proxy server, such as an air-gapped operator.

Use this parameter with image.repo and rbac_proxy_image.repo.

Default: kubebuilder/kube-rbac-proxy:v0.11.0

rbac_proxy_image.repo- Server that hosts the repository that contains rbac_proxy_image.name. Use this parameter for deployments that perform authorization by a proxy server, such as an air-gapped operator.

Use this parameter with image.repo and rbac_proxy_image.name.

Default: gcr.io

reconcileConcurrency.verticaautoscaler- Number of concurrent reconciliation loops the operator runs for all VerticaAutoscaler CRs in the cluster.

reconcileConcurrency.verticadb- Number of concurrent reconciliation loops the operator runs for all VerticaDB CRs in the cluster.

reconcileConcurrency.verticaeventtrigger- Number of concurrent reconciliation loops the operator runs for all EventTrigger CRs in the cluster.

resources.limits and resources.requests- The resource requirements for the operator pod.

resources.limits is the maximum amount of CPU and memory that an operator pod can consume from its host node.

resources.requests is the amount of CPU and memory that Kubernetes reserves for the operator pod when it schedules the pod on a host node.

Defaults:

resources:
  limits:
    cpu: 100m
    memory: 750Mi
  requests:
    cpu: 100m
    memory: 20Mi

serviceAccountAnnotations- Map of annotations that is added to the service account created for the operator.

serviceAccountNameOverride- Controls the name of the service account created for the operator.

tolerations- Any taints and tolerations that influence where the operator pod is scheduled.

webhook.certSource- How TLS certificates are provided for the admission controller webhook. This parameter accepts the following values:

-

internal: The VerticaDB operator internally generates a self-signed, 10-year expiry certificate before starting the managing controller. When the certificate expires, you must manually restart the operator pod to create a new certificate.

-

secret: You generate the custom certificates before you create the Helm chart and store them in a Secret. This option requires that you set webhook.tlsSecret.

If webhook.tlsSecret is set, then this option is implicitly selected.

Default: internal

For details, see Installing the VerticaDB operator.

webhook.enable- Determines whether the Helm chart installs the admission controller webhooks for the custom resource definitions. The webhook is cluster-scoped, and you can install only one webhook per cluster.

If your environment uses namespace-scoped operators, you must install the webhook for the cluster, then disable the webhook for each namespace installation. For details, see Installing the VerticaDB operator.

Caution

Webhooks prevent invalid state changes to the custom resource. Running Vertica on Kubernetes without webhook validations might result in invalid state transitions.

Default: true

webhook.tlsSecret- Secret that contains a PEM-encoded certificate authority (CA) bundle and its keys.

The CA bundle validates the webhook's server certificate. If this is not set, the webhook uses the system trust roots on the apiserver.

This Secret includes the following keys for the CA bundle:

3.4 - Red Hat OpenShift integration

Red Hat OpenShift is a hybrid cloud platform that provides enhanced security features and greater control over the Kubernetes cluster. In addition, OpenShift provides the OperatorHub, a catalog of operators that meet OpenShift requirements.

For comprehensive instructions about the OpenShift platform, refer to the Red Hat OpenShift documentation.

Note

If your Kubernetes cluster is in the cloud or on a managed service, each Vertica node must operate in the same availability zone.

Enhanced security with security context constraints

To enforce security measures, OpenShift requires that each deployment use a security context constraint (SCC). Vertica on Kubernetes supports the restricted-v2 SCC, the most restrictive default SCC available.

The SCC lets administrators control the privileges of the pods in a cluster without manual configuration. For example, you can restrict namespace access for specific users in a multi-user environment.

Installing the operator

The VerticaDB operator is a community operator that is maintained by Vertica. Each operator available in the OperatorHub must adhere to requirements defined by the Operator Lifecycle Manager (OLM). To meet these requirements, vendors must provide a cluster service version (CSV) manifest for each operator. Vertica provides a CSV for each version of the VerticaDB operator available in the OpenShift OperatorHub.

The VerticaDB operator supports OpenShift versions 4.8 and higher.

You must have cluster-admin privileges on your OpenShift account to install the VerticaDB operator. For detailed installation instructions, refer to the OpenShift documentation.

Deploying Vertica on OpenShift

After you install the VerticaDB operator and add a supported SCC to the service account for your Vertica workloads, you can deploy Vertica on OpenShift.

For details about installing OpenShift in supported environments, see the OpenShift Container Platform installation overview.

Before you deploy Vertica on OpenShift, create the required Secrets to store sensitive information. For details about Secrets and OpenShift, see the OpenShift documentation. For guidance on deploying a Vertica custom resource, see VerticaDB custom resource definition.

3.5 - Prometheus integration

Vertica on Kubernetes integrates with Prometheus to scrape time series metrics about the VerticaDB operator and Vertica server process. These metrics create a detailed model of your application over time to provide valuable performance and troubleshooting insights as well as facilitate internal and external communications and service discovery in microservice and containerized architectures.

Prometheus requires that you set up targets—metrics that you want to monitor. Each target is exposed on an endpoint, and Prometheus periodically scrapes that endpoint to collect target data. Vertica exports metrics and provides access methods for both the VerticaDB operator and server process.

Server metrics

Vertica exports server metrics on port 8443 at the following endpoint:

https://host-address:8443/api-version/metrics

Only the superuser can authenticate to the HTTPS service, and the service accepts only mutual TLS (mTLS) authentication. The setup for both Vertica on Kubernetes and non-containerized Vertica environments is identical. For details, see HTTPS service.

Vertica on Kubernetes lets you set a custom port for the HTTPS service with the subclusters[i].verticaHTTPNodePort custom resource parameter. This parameter applies only to subclusters that use the NodePort serviceType.

For request and response examples, see the /metrics endpoint description. For a list of available metrics, see Prometheus metrics.

Grafana dashboards

You can visualize Vertica server time series metrics with Grafana dashboards. Vertica dashboards that use a Prometheus data source are available at Grafana Dashboards:

You can also download the source for each dashboard from the vertica/grafana-dashboards repository.

Operator metrics

The VerticaDB operator supports the Operator SDK framework, which requires that an authorization proxy impose role-based access control (RBAC) to access operator metrics over HTTPS. To increase flexibility, Vertica provides the following options to access the Prometheus /metrics endpoint:

-

HTTPS access: Meet Operator SDK requirements and use a sidecar container as an RBAC proxy to authorize connections.

-

HTTP access: Expose the /metrics endpoint to external connections without RBAC. Any client with network access can read from /metrics.

-

Disable Prometheus entirely.

Vertica provides Helm chart parameters and YAML manifests to configure each option.

Note

If you installed the VerticaDB operator with OperatorHub.io, you can use the Prometheus integration with the default Helm chart settings. OperatorHub.io installations cannot configure any Helm chart parameters.

Prerequisites

HTTPS with RBAC

The Operator SDK framework requires that operators use an authorization proxy for metrics access. Because the operator sends metrics to localhost only, Vertica meets these requirements with a sidecar container with localhost access that enforces RBAC.

RBAC rules are cluster-scoped, and the sidecar authorizes connections from clients associated with a service account that has the correct ClusterRole and ClusterRoleBindings. Vertica provides the following example manifests:

For additional details about ClusterRoles and ClusterRoleBindings, see the Kubernetes documentation.

Create RBAC rules

Note

This section details how to create RBAC rules for environments that require that you set up ClusterRole and ClusterRoleBinding objects outside of the Helm chart installation.

The following steps create the ClusterRole and ClusterRoleBindings objects that grant access to the /metrics endpoint to a non-Kubernetes resource such as Prometheus. Because RBAC rules are cluster-scoped, you must create or add to an existing ClusterRoleBinding:

-

Create a ClusterRoleBinding that binds the role for the RBAC sidecar proxy with a service account:

-

Create a ClusterRoleBinding that binds the role for the non-Kubernetes object to the RBAC sidecar proxy service account:

When you install the Helm chart, the ClusterRole and ClusterRoleBindings are created automatically. When the prometheus.expose parameter is set to EnableWithAuthProxy, the Helm chart also creates the service object and exposes the operator's /metrics endpoint over HTTPS.

For details about creating a sidecar container, see VerticaDB custom resource definition.

Service object

Vertica provides a service object verticadb-operator-metrics-service to access the Prometheus /metrics endpoint. The VerticaDB operator does not manage this service object. By default, the service object uses the ClusterIP service type to support RBAC.

Connect to the /metrics endpoint at port 8443 with the following path:

https://verticadb-operator-metrics-service.namespace.svc.cluster.local:8443/metrics

Bearer token authentication

Kubernetes authenticates requests to the API server with service account credentials. Each pod is associated with a service account and has the following credentials stored in the filesystem of each container in the pod:

Use these credentials to authenticate to the /metrics endpoint through the service object. You must use the credentials for the service account that you used to create the ClusterRoleBindings.

For example, the following cURL request accesses the /metrics endpoint. Include the --insecure option only if you do not want to verify the serving certificate:

$ curl --insecure --cacert /var/run/secrets/kubernetes.io/serviceaccount/ca.crt -H "Authorization: Bearer $(cat /var/run/secrets/kubernetes.io/serviceaccount/token)" https://verticadb-operator-metrics-service.vertica:8443/metrics

For additional details about service account credentials, see the Kubernetes documentation.

TLS client certificate authentication

Some environments might prevent you from authenticating to the /metrics endpoint with the service account token. For example, you might run Prometheus outside of Kubernetes. To allow external client connections to the /metrics endpoint, you have to supply the RBAC proxy sidecar with TLS certificates.

You must create a Secret that contains the certificates, and then use the prometheus.tlsSecret Helm chart parameter to pass the Secret to the RBAC proxy sidecar when you install the Helm chart. The following steps create the Secret and install the Helm chart:

-

Create a Secret that contains the certificates:

$ kubectl create secret generic metrics-tls --from-file=tls.key=/path/to/tls.key --from-file=tls.crt=/path/to/tls.crt --from-file=ca.crt=/path/to/ca.crt

-

Install the Helm chart with prometheus.tlsSecret set to the Secret that you just created:

$ helm install operator-name --namespace namespace --create-namespace vertica-charts/verticadb-operator \
    --set prometheus.tlsSecret=metrics-tls

The prometheus.tlsSecret parameter forces the RBAC proxy to use the TLS certificates stored in the Secret. Otherwise, the RBAC proxy sidecar generates its own self-signed certificate.

After you install the Helm chart, you can authenticate to the /metrics endpoint with the certificates in the Secret. For example:

$ curl --key tls.key --cert tls.crt --cacert ca.crt https://verticadb-operator-metrics-service.vertica.svc:8443/metrics
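A client written in Python could present the same certificates as the cURL request. The following is a minimal sketch with a hypothetical helper name; the file paths are placeholders for wherever you keep the certificate files that you stored in the Secret.

```python
import ssl


def make_client_context(ca_file: str, cert_file: str, key_file: str) -> ssl.SSLContext:
    """Return a TLS context that verifies the server against ca_file and
    presents the client certificate and key (hypothetical helper)."""
    context = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_file)
    context.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return context
```

You can pass the returned context to urllib.request.urlopen(url, context=context) when you request the /metrics endpoint.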

HTTP access

You might have an environment that does not require privileged access to Prometheus metrics. For example, you might run Prometheus outside of Kubernetes.

To allow external access to the /metrics endpoint with HTTP, set prometheus.expose to EnableWithoutAuth. For example:

$ helm install operator-name --namespace namespace --create-namespace vertica-charts/verticadb-operator \
    --set prometheus.expose=EnableWithoutAuth

Service object

Vertica provides a service object verticadb-operator-metrics-service to access the Prometheus /metrics endpoint. The VerticaDB operator does not manage this service object. By default, the service object uses the ClusterIP service type, so you must change the serviceType for external client access. The service object's fully-qualified domain name (FQDN) is as follows:

verticadb-operator-metrics-service.namespace.svc.cluster.local

Connect to the /metrics endpoint at port 8443 with the following path:

http://verticadb-operator-metrics-service.namespace.svc.cluster.local:8443/metrics

Prometheus operator integration (optional)

Vertica on Kubernetes integrates with the Prometheus operator, which provides custom resources (CRs) that simplify targeting metrics. Vertica supports the ServiceMonitor CR that discovers the VerticaDB operator automatically, and authenticates requests with a bearer token.

The ServiceMonitor CR is available as a release artifact in our GitHub repository. See Helm chart parameters for details about the prometheus.createServiceMonitor parameter.

Disabling Prometheus

To disable Prometheus, set the prometheus.expose Helm chart parameter to Disable:

$ helm install operator-name --namespace namespace --create-namespace vertica-charts/verticadb-operator \
    --set prometheus.expose=Disable

For details about Helm install commands, see Installing the VerticaDB operator.

Metrics

The following table describes the available VerticaDB operator metrics:

| Name | Type | Description |
|---|---|---|
| controller_runtime_active_workers | gauge | Number of currently used workers per controller. |
| controller_runtime_max_concurrent_reconciles | gauge | Maximum number of concurrent reconciles per controller. |
| controller_runtime_reconcile_errors_total | counter | Total number of reconciliation errors per controller. |
| controller_runtime_reconcile_time_seconds | histogram | Length of time per reconciliation per controller. |
| controller_runtime_reconcile_total | counter | Total number of reconciliations per controller. |
| controller_runtime_webhook_latency_seconds | histogram | Histogram of the latency of processing admission requests. |
| controller_runtime_webhook_requests_in_flight | gauge | Current number of admission requests being served. |
| controller_runtime_webhook_requests_total | counter | Total number of admission requests by HTTP status code. |
| go_gc_duration_seconds | summary | A summary of the pause duration of garbage collection cycles. |
| go_goroutines | gauge | Number of goroutines that currently exist. |
| go_info | gauge | Information about the Go environment. |
| go_memstats_alloc_bytes | gauge | Number of bytes allocated and still in use. |
| go_memstats_alloc_bytes_total | counter | Total number of bytes allocated, even if freed. |
| go_memstats_buck_hash_sys_bytes | gauge | Number of bytes used by the profiling bucket hash table. |
| go_memstats_frees_total | counter | Total number of frees. |
| go_memstats_gc_sys_bytes | gauge | Number of bytes used for garbage collection system metadata. |
| go_memstats_heap_alloc_bytes | gauge | Number of heap bytes allocated and still in use. |
| go_memstats_heap_idle_bytes | gauge | Number of heap bytes waiting to be used. |
| go_memstats_heap_inuse_bytes | gauge | Number of heap bytes that are in use. |
| go_memstats_heap_objects | gauge | Number of allocated objects. |
| go_memstats_heap_released_bytes | gauge | Number of heap bytes released to OS. |
| go_memstats_heap_sys_bytes | gauge | Number of heap bytes obtained from system. |
| go_memstats_last_gc_time_seconds | gauge | Number of seconds since 1970 of last garbage collection. |
| go_memstats_lookups_total | counter | Total number of pointer lookups. |
| go_memstats_mallocs_total | counter | Total number of mallocs. |
| go_memstats_mcache_inuse_bytes | gauge | Number of bytes in use by mcache structures. |
| go_memstats_mcache_sys_bytes | gauge | Number of bytes used for mcache structures obtained from system. |
| go_memstats_mspan_inuse_bytes | gauge | Number of bytes in use by mspan structures. |
| go_memstats_mspan_sys_bytes | gauge | Number of bytes used for mspan structures obtained from system. |
| go_memstats_next_gc_bytes | gauge | Number of heap bytes when next garbage collection will take place. |
| go_memstats_other_sys_bytes | gauge | Number of bytes used for other system allocations. |
| go_memstats_stack_inuse_bytes | gauge | Number of bytes in use by the stack allocator. |
| go_memstats_stack_sys_bytes | gauge | Number of bytes obtained from system for stack allocator. |
| go_memstats_sys_bytes | gauge | Number of bytes obtained from system. |
| go_threads | gauge | Number of OS threads created. |
| process_cpu_seconds_total | counter | Total user and system CPU time spent in seconds. |
| process_max_fds | gauge | Maximum number of open file descriptors. |
| process_open_fds | gauge | Number of open file descriptors. |
| process_resident_memory_bytes | gauge | Resident memory size in bytes. |
| process_start_time_seconds | gauge | Start time of the process since unix epoch in seconds. |
| process_virtual_memory_bytes | gauge | Virtual memory size in bytes. |
| process_virtual_memory_max_bytes | gauge | Maximum amount of virtual memory available in bytes. |
| vertica_cluster_restart_attempted_total | counter | The number of times we attempted a full cluster restart. |
| vertica_cluster_restart_failed_total | counter | The number of times we failed when attempting a full cluster restart. |
| vertica_cluster_restart_seconds | histogram | The number of seconds it took to do a full cluster restart. |
| vertica_nodes_restart_attempted_total | counter | The number of times we attempted to restart down nodes. |
| vertica_nodes_restart_failed_total | counter | The number of times we failed when trying to restart down nodes. |
| vertica_nodes_restart_seconds | histogram | The number of seconds it took to restart down nodes. |
| vertica_running_nodes_count | gauge | The number of nodes that have a running pod associated with it. |
| vertica_subclusters_count | gauge | The number of subclusters that exist. |
| vertica_total_nodes_count | gauge | The number of nodes that currently exist. |
| vertica_up_nodes_count | gauge | The number of nodes that have vertica running and can accept connections. |
| vertica_upgrade_total | counter | The number of times the operator performed an upgrade caused by an image change. |
| workqueue_adds_total | counter | Total number of adds handled by workqueue. |
| workqueue_depth | gauge | Current depth of workqueue. |
| workqueue_longest_running_processor_seconds | gauge | How many seconds has the longest running processor for workqueue been running. |
| workqueue_queue_duration_seconds | histogram | How long in seconds an item stays in workqueue before being requested. |
| workqueue_retries_total | counter | Total number of retries handled by workqueue. |
| workqueue_unfinished_work_seconds | gauge | How many seconds of work has been done that is in progress and hasn't been observed by work_duration. Large values indicate stuck threads. One can deduce the number of stuck threads by observing the rate at which this increases. |
| workqueue_work_duration_seconds | histogram | How long in seconds processing an item from workqueue takes. |
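The /metrics endpoint returns these metrics in the Prometheus text exposition format, one sample per line. As a rough sketch of how a script might use them, the following Python parses a scrape and compares vertica_total_nodes_count with vertica_up_nodes_count. The sample text, its label names, and the helper functions are assumptions made for illustration; the parser also assumes that sample lines carry no trailing timestamp.

```python
from collections import defaultdict

# Hypothetical scrape output: label names and values are assumptions.
SAMPLE = """\
# HELP vertica_up_nodes_count The number of nodes that have vertica running and can accept connections.
# TYPE vertica_up_nodes_count gauge
vertica_up_nodes_count{namespace="vertica",verticadb="sample-db"} 2
# TYPE vertica_total_nodes_count gauge
vertica_total_nodes_count{namespace="vertica",verticadb="sample-db"} 3
"""


def sum_by_name(text: str) -> dict[str, float]:
    """Sum sample values per metric name, ignoring labels and comment lines."""
    totals: dict[str, float] = defaultdict(float)
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        # Assumes "name{labels} value" with no timestamp after the value.
        series, value = line.rsplit(None, 1)
        name = series.split("{", 1)[0]
        totals[name] += float(value)
    return dict(totals)


def down_nodes(totals: dict[str, float]) -> float:
    """Nodes that exist but are not up, according to the two gauges."""
    return totals.get("vertica_total_nodes_count", 0.0) - totals.get("vertica_up_nodes_count", 0.0)


totals = sum_by_name(SAMPLE)
print(down_nodes(totals))
```

In practice, a Prometheus server performs this kind of aggregation for you; the sketch only shows how the gauges in the table relate to each other.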

3.6 - Secrets management

The Kubernetes declarative model requires that you develop applications with manifest files or command line interactions with the Kubernetes API. These workflows expose your sensitive information in your application code and shell history, which compromises your application security.

To mitigate any security risks, Kubernetes uses the concept of a secret to store this sensitive information. A secret is an object with a plain text name and a value stored as a base64 encoded string. When you reference a secret by name, Kubernetes retrieves and decodes its value. This lets you openly reference confidential information in your application code and shell without compromising your data.

Kubernetes supports secret workflows with its native Secret object, and cloud providers offer solutions that store your confidential information in a centralized location for easy management. By default, Vertica on Kubernetes supports native Secret objects, and it also supports cloud solutions so that you have options for storing your confidential data.

For best practices about handling confidential data in Kubernetes, see the Kubernetes documentation.

Manually encode data

In some circumstances, you might need to manually base64 encode your secret value and add it to a Secret manifest or a cloud service secret manager. You can base64 encode data with tools available in your shell. For example, pass the string value to the echo command, and pipe the output to the base64 command to encode the value. In the echo command, include the -n option so that it does not append a newline character:

$ echo -n 'secret-value' | base64

c2VjcmV0LXZhbHVl

You can take the output of this command and add it to a Secret manifest or cloud service secret manager.
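If you prepare secret values in a script instead of the shell, the same encoding is available in the Python standard library. This sketch uses hypothetical helper names and shows why the trailing newline matters: including it changes the encoded value.

```python
import base64


def encode_secret(value: str) -> str:
    """Base64 encode a string, as `echo -n value | base64` does."""
    return base64.b64encode(value.encode("utf-8")).decode("ascii")


def decode_secret(encoded: str) -> str:
    """Reverse the encoding to recover the original string."""
    return base64.b64decode(encoded).decode("utf-8")


print(encode_secret("secret-value"))    # c2VjcmV0LXZhbHVl
print(encode_secret("secret-value\n"))  # c2VjcmV0LXZhbHVlCg== (newline included)
print(decode_secret("c2VjcmV0LXZhbHVl"))  # secret-value
```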

Kubernetes Secrets

A Secret is a Kubernetes object that you can reference by name and that conceals confidential data in a base64-encoded string. For example, you can create a Secret named su-password that stores the database superuser password. In a manifest file, you can add su-password in place of the literal password value, and then you can safely store the manifest in a file system or pass it on the command line.

The idiomatic way to create a Secret in Kubernetes is with the kubectl command-line tool's create secret command, which provides options to create a Secret object from various data sources. For example, the following command creates a Secret named superuser-password from a literal value passed on the command line:

$ kubectl create secret generic superuser-password \
    --from-literal=password=secret-value

secret/superuser-password created

Instead of creating a Kubernetes Secret with kubectl, you can manually base64 encode a string on the command line, and then add the encoded output to a Secret manifest.
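As an illustration of the manual approach, the following Python sketch builds a Secret manifest with base64-encoded values and prints it as JSON, which kubectl accepts in place of YAML. The helper name is hypothetical, and the Opaque type and the password key mirror the kubectl example above; adjust them for your own Secret.

```python
import base64
import json


def secret_manifest(name: str, data: dict[str, str]) -> dict:
    """Build a Secret manifest whose data values are base64 encoded
    (hypothetical helper for illustration)."""
    encoded = {
        key: base64.b64encode(value.encode("utf-8")).decode("ascii")
        for key, value in data.items()
    }
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name},
        "type": "Opaque",
        "data": encoded,
    }


manifest = secret_manifest("superuser-password", {"password": "secret-value"})
print(json.dumps(manifest, indent=2))
```

You could save the printed JSON to a file and create the Secret with kubectl apply -f. Keep in mind that the manifest file then contains the encoded value, so store it with the same care as the secret itself.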

Cloud providers