Clustering algorithms

Clustering is a popular machine learning technique for finding groups of similar items in a data set. The goal is to form clusters whose members are more similar to one another than to members of other clusters. Like classification, clustering segments the data; unlike classification, the groups are not defined in advance.

Clustering data into related groupings has many useful applications. If you already know how many clusters your data contains, the k-means algorithm may be sufficient: you train a model and then use that model to predict cluster membership for new data points.

However, in the more common case, you do not know before analyzing the data how many clusters it contains. In these cases, the bisecting k-means algorithm is often more effective, because the hierarchy it produces helps you decide how many clusters the data contains.

Both k-means and bisecting k-means predict the clusters for a given data set. A model trained using either algorithm can then be used to predict the cluster to which new data points are assigned.

Clustering can be used to find anomalies in data and find natural groups of data. For example, you can use clustering to analyze a geographical region and determine which areas of that region are most likely to be hit by an earthquake. For a complete example, see Earthquake Cluster Analysis Using the KMeans Approach.

In Vertica, clustering is computed based on Euclidean distance. Through this computation, data points are assigned to the cluster with the nearest center.
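The assignment rule described above can be sketched in a few lines of Python. This is only an illustration of nearest-center assignment under Euclidean distance, not Vertica's implementation. The centers are the ones reported later in this section by GET_MODEL_SUMMARY for the agar_dish_kmeans model; the function names and the sample point are hypothetical.

```python
import math

# Cluster centers copied from the k-means model summary in this section.
centers = [
    (0.49708, 0.51116),
    (-7.48119, -7.52577),
    (-1.56238, -1.50561),
    (-3.50616, -3.55703),
    (-5.52057, -5.49197),
]

def euclidean(p, q):
    """Euclidean distance between two points of equal dimension."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))

def nearest_center(point, centers):
    """Return the list index of the center closest to point."""
    return min(range(len(centers)), key=lambda i: euclidean(point, centers[i]))

# Hypothetical new observation, chosen only for illustration.
point = (-3.0, -3.2)
print(nearest_center(point, centers))  # 3
```

The point lands on index 3 because the center at (-3.50616, -3.55703) is the closest of the five.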

1 - K-means

You can use the k-means clustering algorithm to cluster data points into k different groups based on similarities between the data points.

k-means partitions n observations into k clusters. Through this partitioning, k-means assigns each observation to the cluster with the nearest mean, or cluster center.
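As a rough illustration of this partitioning, the following Python sketch alternates between assigning each observation to its nearest center and moving each center to the mean of its members. It assumes fixed starting centers and stops when the centers no longer change; Vertica's KMEANS differs in details such as center initialization and its convergence threshold, so treat the function names and the toy data as hypothetical.

```python
def assign(points, centers):
    """Index of the nearest center (squared Euclidean distance) per point."""
    return [
        min(range(len(centers)),
            key=lambda i: sum((a - b) ** 2 for a, b in zip(p, centers[i])))
        for p in points
    ]

def update(points, labels, centers):
    """Move each center to the mean of the points assigned to it."""
    new_centers = []
    for i, old in enumerate(centers):
        members = [p for p, label in zip(points, labels) if label == i]
        if members:
            new_centers.append(tuple(sum(dim) / len(members) for dim in zip(*members)))
        else:
            new_centers.append(old)  # an empty cluster keeps its center
    return new_centers

def kmeans(points, centers, max_iterations=20):
    """Repeat assign/update until the centers stop changing."""
    centers = [tuple(c) for c in centers]
    for _ in range(max_iterations):
        new_centers = update(points, assign(points, centers), centers)
        if new_centers == centers:
            break
        centers = new_centers
    return centers, assign(points, centers)

# Toy data: two visually obvious groups (hypothetical values).
points = [(1.0, 1.0), (1.0, 2.0), (2.0, 1.0), (8.0, 8.0), (9.0, 8.0), (8.0, 9.0)]
centers, labels = kmeans(points, [(0.0, 0.0), (10.0, 10.0)])
print(labels)  # [0, 0, 0, 1, 1, 1]
```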

For a complete example of how to use k-means on a table in Vertica, see Clustering data using k-means.

1.1 - Clustering data using k-means

This k-means example uses two small data sets: agar_dish_1 and agar_dish_2. Using the numeric data in the agar_dish_1 data set, you can cluster the data into k clusters. Then, using the created k-means model, you can run APPLY_KMEANS on agar_dish_2 and assign its data points to the clusters created in your original model.

Before you begin the example, load the Machine Learning sample data.

Clustering training data into k clusters

- Create the k-means model named agar_dish_kmeans, using the agar_dish_1 table data.

=> SELECT KMEANS('agar_dish_kmeans', 'agar_dish_1', '*', 5

USING PARAMETERS exclude_columns ='id', max_iterations=20, output_view='agar_1_view',

key_columns='id');

KMEANS

---------------------------

Finished in 7 iterations

(1 row)

The example creates a model named agar_dish_kmeans and a view containing the results of the model named agar_1_view. You might get different results when you run the clustering algorithm. This is because KMEANS randomly picks initial centers by default.

- View the output of agar_1_view.

=> SELECT * FROM agar_1_view;

id | cluster_id

-----+------------

2 | 4

5 | 4

7 | 4

9 | 4

13 | 4

.

.

.

(375 rows)

- Because you specified the number of clusters as 5, verify that the function created five clusters. Count the number of data points within each cluster.

=> SELECT cluster_id, COUNT(cluster_id) as Total_count

FROM agar_1_view

GROUP BY cluster_id;

cluster_id | Total_count

------------+-------------

0 | 76

2 | 80

1 | 74

3 | 73

4 | 72

(5 rows)

From the output, you can see that five clusters were created: 0, 1, 2, 3, and 4.

You have now successfully clustered the data from agar_dish_1.csv into five distinct clusters.

Summarizing your model

View the summary output of agar_dish_kmeans using the GET_MODEL_SUMMARY function.

=> SELECT GET_MODEL_SUMMARY(USING PARAMETERS model_name='agar_dish_kmeans');

----------------------------------------------------------------------------------

=======

centers

=======

x | y

--------+--------

0.49708 | 0.51116

-7.48119|-7.52577

-1.56238|-1.50561

-3.50616|-3.55703

-5.52057|-5.49197

=======

metrics

=======

Evaluation metrics:

Total Sum of Squares: 6008.4619

Within-Cluster Sum of Squares:

Cluster 0: 12.083548

Cluster 1: 12.389038

Cluster 2: 12.639238

Cluster 3: 11.210146

Cluster 4: 12.994356

Total Within-Cluster Sum of Squares: 61.316326

Between-Cluster Sum of Squares: 5947.1456

Between-Cluster SS / Total SS: 98.98%

Number of iterations performed: 2

Converged: True

Call:

kmeans('public.agar_dish_kmeans', 'agar_dish_1', '*', 5

USING PARAMETERS exclude_columns='id', max_iterations=20, epsilon=0.0001, init_method='kmeanspp',

distance_method='euclidean', output_view='agar_1_view', key_columns='id')

(1 row)
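The metrics in this summary are related by simple arithmetic, which the following Python sketch reproduces. All numbers are copied from the output above; the variable names are illustrative only.

```python
# Values copied from the GET_MODEL_SUMMARY output above.
total_ss = 6008.4619
within_ss = [12.083548, 12.389038, 12.639238, 11.210146, 12.994356]

# The total within-cluster SS is the sum of the per-cluster values.
total_within_ss = sum(within_ss)
# The between-cluster SS is whatever part of the total SS remains.
between_ss = total_ss - total_within_ss
ratio = between_ss / total_ss

print(f"Total within-cluster SS: {total_within_ss:.6f}")  # 61.316326
print(f"Between-cluster SS:      {between_ss:.4f}")       # 5947.1456
print(f"Between SS / Total SS:   {ratio:.2%}")            # 98.98%
```

A ratio close to 100% means that most of the variation in the data is explained by differences between clusters rather than by spread within them.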

Clustering data using a k-means model

Using agar_dish_kmeans, the k-means model you just created, you can assign the points in agar_dish_2 to cluster centers.

Create a table named kmeans_results, using the agar_dish_2 table as your input table and the agar_dish_kmeans model for the cluster centers.

Pass only the relevant feature columns as arguments to the APPLY_KMEANS function.

=> CREATE TABLE kmeans_results AS

(SELECT id,

APPLY_KMEANS(x, y

USING PARAMETERS

model_name='agar_dish_kmeans') AS cluster_id

FROM agar_dish_2);

The kmeans_results table now contains the cluster that the agar_dish_kmeans model assigned to each data point in agar_dish_2.

2 - K-prototypes

You can use the k-prototypes clustering algorithm to cluster mixed data into different groups based on similarities between the data points. The k-prototypes algorithm extends the functionality of k-means clustering, which is limited to numerical data, by combining it with k-modes clustering, a clustering algorithm for categorical data.
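One common way to combine the two ideas is a mixed dissimilarity measure: squared Euclidean distance on the numeric attributes plus a weighted count of mismatches on the categorical attributes. The Python sketch below shows that formulation. Whether Vertica uses exactly this weighting is an assumption, and the function name, the `gamma` weight, and the sample values are hypothetical.

```python
def mixed_dissimilarity(numeric_a, numeric_b, categorical_a, categorical_b, gamma=1.0):
    """Squared Euclidean distance on numeric attributes plus gamma times
    the number of categorical attributes whose values differ."""
    numeric_part = sum((a - b) ** 2 for a, b in zip(numeric_a, numeric_b))
    categorical_part = sum(a != b for a, b in zip(categorical_a, categorical_b))
    return numeric_part + gamma * categorical_part

# Hypothetical data point and cluster prototype.
point_numeric, point_categorical = (1.0, 2.0), ("red", "small")
proto_numeric, proto_categorical = (1.5, 2.0), ("red", "large")

print(mixed_dissimilarity(point_numeric, proto_numeric,
                          point_categorical, proto_categorical,
                          gamma=0.5))  # 0.75
```

Here the numeric part contributes 0.25 and the single categorical mismatch contributes 0.5. A larger `gamma` makes categorical differences count for more relative to numeric ones.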

For the function syntax, see KPROTOTYPES.

3 - Bisecting k-means

The bisecting k-means clustering algorithm combines k-means clustering with divisive hierarchical clustering. With bisecting k-means, you get not only the clusters but also the hierarchical structure of the clusters of data points.

This hierarchy is more informative than the unstructured set of flat clusters returned by k-means. It shows how the clustering results look at every step of the bisecting process, which makes it easier to decide how many clusters the data set contains.

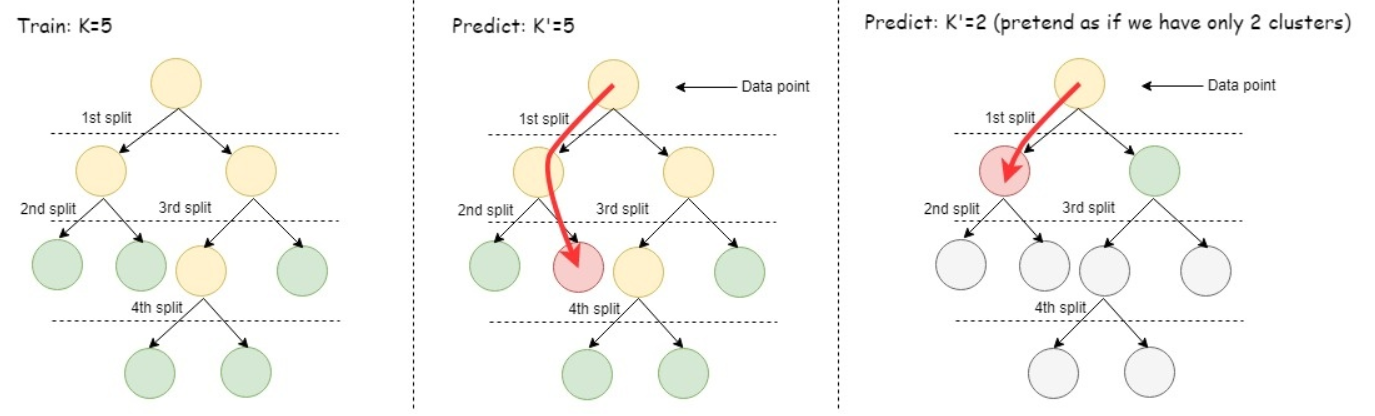

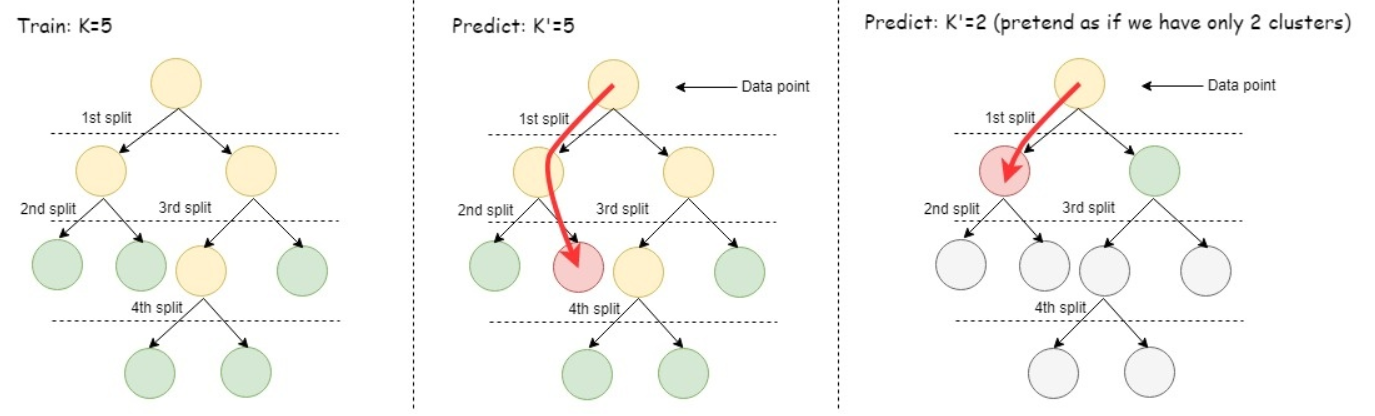

Given a hierarchy of k clusters produced by bisecting k-means, you can easily compute predictions under the assumption that the data contains only k' clusters, where k' is any number less than or equal to the k used to train the model.

For a complete example of how to use bisecting k-means to analyze a table in Vertica, see Clustering data hierarchically using bisecting k-means.

3.1 - Clustering data hierarchically using bisecting k-means

This bisecting k-means example uses two small data sets named agar_dish_training and agar_dish_testing. Using the numeric data in the agar_dish_training data set, you can cluster the data into k clusters. Then, using the resulting bisecting k-means model, you can run APPLY_BISECTING_KMEANS on agar_dish_testing and assign the data to the clusters created in your trained model. Unlike regular k-means (also provided in Vertica), bisecting k-means allows you to predict with any number of clusters less than or equal to k. So if you train the model with k=5 but later decide to predict with k=2, you do not have to retrain the model; just run APPLY_BISECTING_KMEANS with k=2.

Before you begin the example, load the Machine Learning sample data. For this example, we load agar_dish_training.csv and agar_dish_testing.csv.

Clustering training data into k clusters to train the model

- Create the bisecting k-means model, named agar_dish_bkmeans, using the agar_dish_training table data.

=> SELECT BISECTING_KMEANS('agar_dish_bkmeans', 'agar_dish_training', '*', 5 USING PARAMETERS exclude_columns='id', key_columns='id', output_view='agar_1_view');

BISECTING_KMEANS

------------------

Finished.

(1 row)

This example creates a model named agar_dish_bkmeans and a view containing the results of the model named agar_1_view. You might get slightly different results when you run the clustering algorithm. This is because BISECTING_KMEANS uses random numbers to generate the best clusters.

- View the output of agar_1_view.

=> SELECT * FROM agar_1_view;

id | cluster_id

-----+------------

2 | 4

5 | 4

7 | 4

9 | 4

...

Here we can see the id of each point in the agar_dish_training table and which cluster it has been assigned to.

- Because we specified the number of clusters as 5, verify that the function created five clusters by counting the number of data points within each cluster.

=> SELECT cluster_id, COUNT(cluster_id) as Total_count FROM agar_1_view GROUP BY cluster_id;

cluster_id | Total_count

------------+-------------

5 | 76

7 | 73

8 | 74

4 | 72

6 | 80

(5 rows)

You may wonder why the cluster_ids do not start at 0 or 1. Bisecting k-means creates more clusters than k-means does, because it keeps every intermediate cluster in the hierarchy, and then outputs only the ones needed for the designated value of k. We will see later why this is useful.

You have now successfully clustered the data from agar_dish_training.csv into five distinct clusters.

Summarizing your model

View the summary output of agar_dish_bkmeans using the GET_MODEL_SUMMARY function.

```

=> SELECT GET_MODEL_SUMMARY(USING PARAMETERS model_name='agar_dish_bkmeans');

======

BKTree

======

center_id| x | y | withinss |totWithinss|bisection_level|cluster_size|parent|left_child|right_child

---------+--------+--------+----------+-----------+---------------+------------+------+----------+-----------

0 |-3.59450|-3.59371|6008.46192|6008.46192 | 0 | 375 | | 1 | 2

1 |-6.47574|-6.48280|336.41161 |1561.29110 | 1 | 156 | 0 | 5 | 6

2 |-1.54210|-1.53574|1224.87949|1561.29110 | 1 | 219 | 0 | 3 | 4

3 |-2.54088|-2.53830|317.34228 | 665.83744 | 2 | 147 | 2 | 7 | 8

4 | 0.49708| 0.51116| 12.08355 | 665.83744 | 2 | 72 | 2 | |

5 |-7.48119|-7.52577| 12.38904 | 354.80922 | 3 | 76 | 1 | |

6 |-5.52057|-5.49197| 12.99436 | 354.80922 | 3 | 80 | 1 | |

7 |-1.56238|-1.50561| 12.63924 | 61.31633 | 4 | 73 | 3 | |

8 |-3.50616|-3.55703| 11.21015 | 61.31633 | 4 | 74 | 3 | |

=======

Metrics

=======

Measure | Value

-----------------------------------------------------+----------

Total sum of squares |6008.46192

Total within-cluster sum of squares | 61.31633

Between-cluster sum of squares |5947.14559

Between-cluster sum of squares / Total sum of squares| 98.97950

Sum of squares for cluster 1, center_id 5 | 12.38904

Sum of squares for cluster 2, center_id 6 | 12.99436

Sum of squares for cluster 3, center_id 7 | 12.63924

Sum of squares for cluster 4, center_id 8 | 11.21015

Sum of squares for cluster 5, center_id 4 | 12.08355

===========

call_string

===========

bisecting_kmeans('agar_dish_bkmeans', 'agar_dish_training', '*', 5

USING PARAMETERS exclude_columns='id', bisection_iterations=1, split_method='SUM_SQUARES', min_divisible_cluster_size=2, distance_method='euclidean', kmeans_center_init_method='kmeanspp', kmeans_epsilon=0.0001, kmeans_max_iterations=10, output_view=''agar_1_view'', key_columns=''id'')

===============

Additional Info

===============

Name |Value

---------------------+-----

num_of_clusters | 5

dimensions_of_dataset| 2

num_of_clusters_found| 5

height_of_BKTree | 4

(1 row)

```

Here we can see the details of all the intermediate clusters created by bisecting k-means during training, some metrics for evaluating the quality of the clustering (the lower the within-cluster sum of squares, the better), the specific parameters with which the algorithm was trained, and some general information about the data and the model.

Clustering testing data using a bisecting k-means model

Using agar_dish_bkmeans, the bisecting k-means model you just created, you can assign the points in agar_dish_testing to cluster centers.

- Create a table named bkmeans_results_k5, using the agar_dish_testing table as your input table and the agar_dish_bkmeans model for your cluster centers. Pass only the relevant feature columns as arguments to the APPLY_BISECTING_KMEANS function.

=> CREATE TABLE bkmeans_results_k5 AS

(SELECT id,

APPLY_BISECTING_KMEANS(x, y

USING PARAMETERS

model_name='agar_dish_bkmeans', number_clusters=5) AS cluster_id

FROM agar_dish_testing);

=> SELECT cluster_id, COUNT(cluster_id) as Total_count FROM bkmeans_results_k5 GROUP BY cluster_id;

cluster_id | Total_count

------------+-------------

5 | 24

4 | 28

6 | 20

8 | 26

7 | 27

(5 rows)

The bkmeans_results_k5 table shows that the agar_dish_bkmeans model correctly clustered the agar_dish_testing data.

- The real advantage of using bisecting k-means is that the model it creates can cluster data into any number of clusters less than or equal to the k with which it was trained. For example, you can cluster the above testing data into 3 clusters instead of 5, without retraining the model:

=> CREATE TABLE bkmeans_results_k3 AS

(SELECT id,

APPLY_BISECTING_KMEANS(x, y

USING PARAMETERS

model_name='agar_dish_bkmeans', number_clusters=3) AS cluster_id

FROM agar_dish_testing);

=> SELECT cluster_id, COUNT(cluster_id) as Total_count FROM bkmeans_results_k3 GROUP BY cluster_id;

cluster_id | Total_count

------------+-------------

4 | 28

3 | 53

1 | 44

(3 rows)
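The cluster IDs returned for 3 clusters (1, 3, and 4) match what you get by cutting the BKTree from the model summary after two bisections. The Python sketch below makes that reading explicit. It assumes that bisection_level records the bisection step at which a node was created; this interpretation is inferred from the example output, not taken from a specification, and the function name is hypothetical.

```python
# (bisection_level, left_child, right_child) per center_id, copied from the
# BKTree section of the model summary above. None marks a missing child.
BKTREE = {
    0: (0, 1, 2),
    1: (1, 5, 6),
    2: (1, 3, 4),
    3: (2, 7, 8),
    4: (2, None, None),
    5: (3, None, None),
    6: (3, None, None),
    7: (4, None, None),
    8: (4, None, None),
}

def clusters_for_k(tree, k):
    """center_ids that act as leaf clusters after k - 1 bisections."""
    max_level = k - 1
    leaves = []
    for center_id, (level, left, _right) in tree.items():
        created = level <= max_level
        # A node is still a leaf if it has no children, or if its children
        # were only created by a later bisection.
        split = left is not None and tree[left][0] <= max_level
        if created and not split:
            leaves.append(center_id)
    return sorted(leaves)

for k in (2, 3, 5):
    print(k, clusters_for_k(BKTREE, k))
# 2 [1, 2]
# 3 [1, 3, 4]
# 5 [4, 5, 6, 7, 8]
```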

Prediction using the trained bisecting k-means model

To cluster data using a trained model, the bisecting k-means algorithm starts by comparing the incoming data point with the child cluster centers of the root cluster node. The algorithm finds which of those centers the data point is closest to. Then the data point is compared with the child cluster centers of the closest child of the root. The prediction process continues to iterate until it reaches a leaf cluster node. Finally, the point is assigned to the closest leaf cluster. The following picture gives a simple illustration of the training process and prediction process of the bisecting k-means algorithm. An advantage of using bisecting k-means is that you can predict using any value of k from 2 to the largest k value the model was trained on.

The model in the picture below was trained on k=5. The middle picture shows using the model to predict with k=5, in other words, matching the incoming data point to the closest center at the level of the hierarchy where there are 5 leaf clusters. The picture on the right shows using the model to predict as if k=2, in other words, comparing the incoming data point to the clusters at the level where there were only two clusters, then matching the data point to the closer of those two cluster centers. Because each step compares the point with only two child centers, this traversal can require fewer distance computations than k-means prediction, which compares the point with every center, particularly when k is large.
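The descent described above can be sketched in Python using the BKTree values from the model summary in this section. This is a minimal illustration, not Vertica's implementation: it assumes that a model used with k clusters stops descending at nodes whose children were created after bisection k - 1, and the function name and sample points are hypothetical.

```python
import math

# center_id: (x, y, bisection_level, left_child, right_child), copied from the
# BKTree section of the model summary in this section.
BKTREE = {
    0: (-3.59450, -3.59371, 0, 1, 2),
    1: (-6.47574, -6.48280, 1, 5, 6),
    2: (-1.54210, -1.53574, 1, 3, 4),
    3: (-2.54088, -2.53830, 2, 7, 8),
    4: (0.49708, 0.51116, 2, None, None),
    5: (-7.48119, -7.52577, 3, None, None),
    6: (-5.52057, -5.49197, 3, None, None),
    7: (-1.56238, -1.50561, 4, None, None),
    8: (-3.50616, -3.55703, 4, None, None),
}

def predict(point, k, tree=BKTREE):
    """Descend from the root to the closest leaf of the k-cluster hierarchy."""
    node = 0
    while True:
        _x, _y, _level, left, right = tree[node]
        # Stop at a true leaf, or where the children belong to a bisection
        # that a k-cluster model would not have performed yet.
        if left is None or tree[left][2] > k - 1:
            return node
        # Otherwise move to whichever child center is closer to the point.
        node = min((left, right), key=lambda c: math.dist(point, tree[c][:2]))

# Hypothetical incoming data point.
point = (-3.0, -3.2)
print(predict(point, 5))  # 8
print(predict(point, 3))  # 3
print(predict(point, 2))  # 2
```

With k=5 the point descends through nodes 2 and 3 to leaf 8. With k=3 or k=2 the same descent simply stops earlier, which is why no retraining is needed.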
